Why Healthcare AI Is Not Just “Innovation”

Healthcare AI is almost always described as an innovation.

Smarter diagnostics.

More efficient workflows.

Expanded access.

Lower costs.

This framing is comforting… and incomplete.

Because most healthcare AI tools are not adopted when health systems are healthy.

They are adopted when systems are strained.

Healthcare AI is not entering a vacuum of possibilities.

It is entering a care vacuum.

Scarcity Is the Hidden Driver

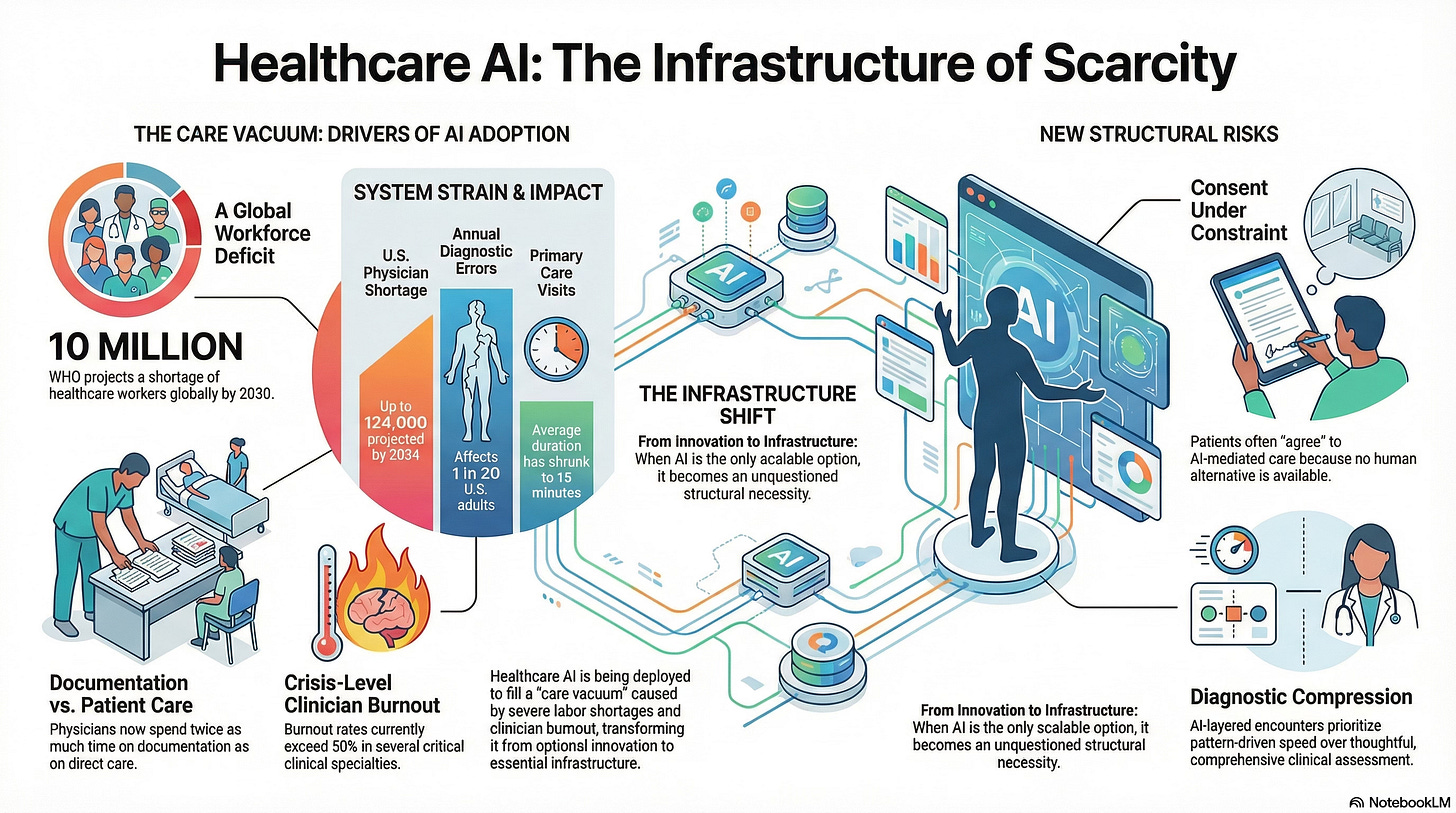

Across healthcare systems, the same pressures recur, and they are measurable.

In the United States alone:

The Association of American Medical Colleges projects a shortage of up to 124,000 physicians by 2034, with primary care and behavioral health most affected.

Physicians now spend nearly twice as much time on documentation as on direct patient care, according to time-motion studies.

Burnout rates exceed 50% in several clinical specialties, with emotional exhaustion cited as a leading driver of attrition.

Globally, the World Health Organization estimates a shortage of 10 million healthcare workers by 2030, disproportionately affecting low- and middle-income countries.

AI enters precisely here.

Not because care systems are flourishing—but because they are nearing capacity.

AI Is Filling Gaps, Not Replacing Excellence

Look at where AI is first deployed:

automated symptom triage,

radiology pre-reads,

clinical risk scoring,

mental health chat support.

These are not prestige use cases.

They are pressure points.

In many systems, AI-mediated triage is now the default front door, not an optional add-on—because appointment wait times stretch from weeks to months.

When AI becomes the only scalable option, it ceases to be innovative.

It becomes infrastructure.

Infrastructure is hard to question once dependency sets in.

Diagnostic Compression Is a Structural Shift

Primary care visit lengths have steadily decreased, even as patient complexity increases. In the U.S., average visit times hover around 15 minutes, during which clinicians must:

assess symptoms,

review history,

document extensively,

and make decisions with downstream consequences.

Diagnostic errors are estimated to affect at least 1 in 20 adults annually, often not because clinicians lack intelligence, but because systems lack time.

AI diagnostic support is introduced to improve accuracy.

But when it is layered onto compressed encounters, it also reshapes:

What is noticed,

What is escalated,

And what is deferred.

Diagnosis becomes narrower, faster, and more pattern-driven, not necessarily more thoughtful.

Consent Under Constraint Is Not Free Choice

Consent assumes alternatives.

But many patients now encounter AI-mediated care because:

Primary care panels are full,

Specialist wait times exceed months,

Or geographic access is limited.

In mental health, for example, AI-based conversational tools often appear not as complements, but as the only immediate option.

When access to human care is constrained, consent shifts:

from deliberative,

to acquiescent.

Patients may technically agree, but under conditions they did not choose.

Governance frameworks that treat this consent as ethically sufficient misunderstand the system context in which it occurs.

Governance Is Lagging Reality

Regulatory structures have not kept pace with deployment.

Most healthcare AI tools are regulated as:

Decision support,

Workflow optimization,

Or low-risk clinical aids.

Yet in practice, they:

Shape prioritization,

Influence diagnosis,

And affect access to care.

Accountability is often diffuse:

Vendors disclaim clinical responsibility,

Clinicians inherit liability without authority,

And institutions prioritize throughput metrics over safeguards for clinical judgment.

This is not malice.

It is structural lag.

Why Governance Must Begin with Scarcity

Most AI governance debates start with model properties:

Bias,

Explainability,

Accuracy.

In healthcare, the first question must be simpler and harder:

Which system failure necessitated this tool?

If AI is compensating for scarcity, then governance must address:

Constrained choice,

Asymmetrical dependency,

And accelerated normalization.

That requires:

Explicit boundaries on substitution vs. support,

Escalation paths when uncertainty exceeds thresholds,

Protection for clinicians who override systems,

And pacing mechanisms that preserve human comprehension.
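To make the first three requirements concrete, here is a minimal sketch of how a deployment might encode them as explicit policy rather than leaving them implicit. Everything in it is an assumption made for illustration: the task names, the 0.2 uncertainty threshold, and the `Policy`, `route_case`, and `record_override` names are hypothetical and do not describe any real product or regulation.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTOMATE = "automate"   # tool may act without clinician sign-off
    SUPPORT = "support"     # tool output is advisory; a clinician decides
    ESCALATE = "escalate"   # case goes to a human before anything else happens


@dataclass(frozen=True)
class Policy:
    # Hypothetical threshold: escalate when reported uncertainty exceeds it.
    max_uncertainty: float = 0.2
    # Explicit substitution boundary: only these tasks may run unattended.
    substitutable_tasks: frozenset = frozenset({"appointment_reminder"})


def route_case(task: str, uncertainty: float, policy: Policy = Policy()) -> Route:
    """Apply the escalation path first, then the substitution boundary."""
    if not 0.0 <= uncertainty <= 1.0:
        raise ValueError("uncertainty must be between 0 and 1")
    if uncertainty > policy.max_uncertainty:
        return Route.ESCALATE
    if task in policy.substitutable_tasks:
        return Route.AUTOMATE
    return Route.SUPPORT


def record_override(log: list, clinician_id: str, reason: str) -> dict:
    """Log an override as a protected, first-class event, not an exception."""
    entry = {"clinician_id": clinician_id, "reason": reason, "protected": True}
    log.append(entry)
    return entry
```

In this sketch, a low-uncertainty diagnosis still routes to `SUPPORT` because diagnosis is not on the substitutable list, and any case above the threshold routes to `ESCALATE` regardless of task. The point is not the specific numbers but that the boundaries are written down where they can be audited.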

Without these, AI does not merely assist care; it quietly redefines it.

A Cognitive Age Reframe

The Cognitive Age is not defined by smarter tools.

It is defined by how institutions govern intelligence under pressure.

Healthcare is the clearest test.

If we treat AI solely as an innovation, we obscure the forces driving its adoption and the risks it introduces.

If we treat AI as a scarcity response, governance becomes unavoidable.

Not as ethics theater.

As infrastructure.

The "adopted by necessity, not by design" framing is the one that sticks. Once the dependency is structural, the evaluation window is already gone. I see the same thing with enterprise LLM rollouts: adopted under deadline pressure, justified later by inertia.