Why Consent Breaks Down in Healthcare AI and What Must Replace It

Consent is often treated as the moral foundation of modern healthcare.

We ask patients to agree.

We document their approval.

We reassure ourselves that participation is voluntary.

In AI-enabled healthcare, this framework is increasingly inadequate.

Not because people refuse consent, but because the conditions under which consent is given have fundamentally changed.

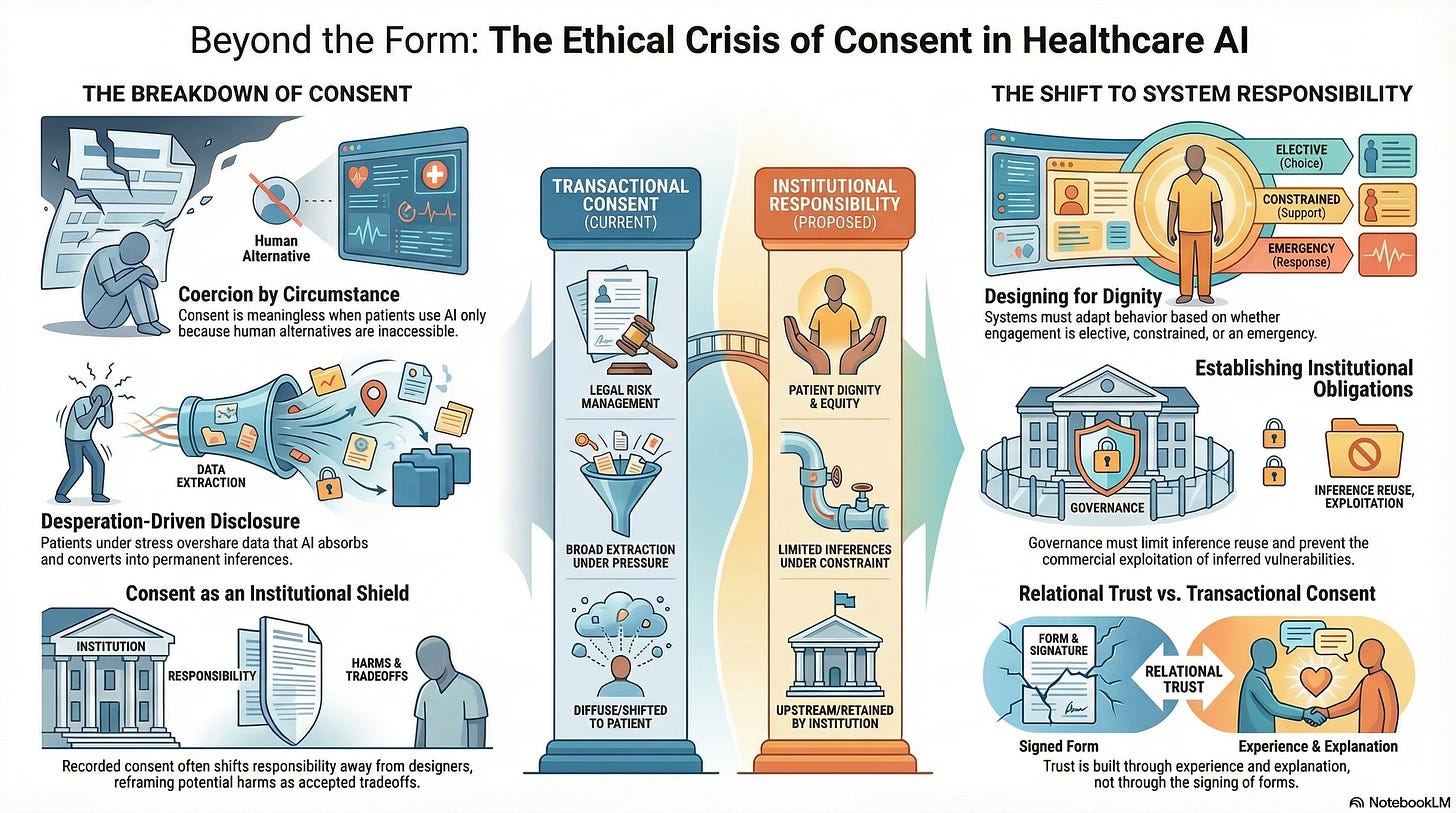

Consent Presumes Choice. Healthcare Scarcity Removes It.

Consent is meaningful only when refusal is viable.

In many healthcare contexts today, refusal is not.

Patients turn to AI-driven systems not because they prefer them, but because alternatives are unavailable:

Clinics are understaffed

Wait times are measured in months

Specialists are geographically inaccessible

Costs exceed reach

Under these conditions, consent becomes conditional: the patient either agrees and receives care, or refuses and goes without it.

This is not coercion in the legal sense.

It is coercion by circumstance.

And systems built on circumstantial consent are ethically unstable.

Disclosure Under Duress Is Not Neutral Data

When people seek help under constraint, they disclose differently.

They share more.

They overshare.

They reveal fears, behaviors, and uncertainties they would otherwise hold back.

This is not trust-driven transparency.

It is desperation-driven disclosure.

AI systems absorb this information without context. They convert it into inferences that shape care pathways, risk classifications, and future access.

The problem is not that systems collect data.

It is that they do so in environments where refusal incurs costs.

Calling this “voluntary participation” obscures the moral reality of the exchange.

The Illusion of Informed Consent

Healthcare AI consent processes often emphasize disclosure:

Terms of use

Data handling policies

Algorithmic involvement statements

But understanding that an AI system is involved is not the same as understanding how its inferences will be used.

Most patients cannot reasonably anticipate:

How long inferences persist

Who has access to them

How they shape downstream decisions

Whether they can be challenged or reversed

Consent that cannot be meaningfully informed is procedural, not ethical.

When Consent Becomes a Shield for Institutions

Consent mechanisms often function less as protections for patients and more as risk management tools for organizations.

Once consent is recorded:

Responsibility shifts away from system designers

Harms are reframed as accepted tradeoffs

Accountability becomes diffuse

This is particularly dangerous in healthcare, where individuals lack bargaining power and institutional asymmetry is extreme.

Consent should protect the vulnerable.

When it protects the system instead, it has failed.

Trust Is Not Created by Forms

Trust emerges from experience.

Patients trust systems when they:

Feel understood rather than processed

Receive explanations rather than bare outcomes

Experience continuity rather than fragmentation

Can question decisions without penalty

AI systems that rely on consent alone to establish legitimacy fundamentally misunderstand trust.

Trust is relational.

Consent is transactional.

Healthcare AI systems that substitute the latter for the former will struggle to sustain legitimacy over time.

Why Speed Makes Consent More Fragile

As AI systems accelerate care pathways, consent windows shrink.

Decisions are made:

Before patients fully understand options

While individuals are under stress

Without time for reflection or discussion

Speed amplifies power asymmetry.

It privileges system momentum over human deliberation.

In such environments, consent becomes a formality, a speed bump quickly cleared rather than a meaningful pause.

Toward Governance Beyond Consent

If consent cannot carry the ethical weight we place on it, what must replace it?

The answer is not less autonomy.

It is stronger system responsibility.

Healthcare AI governance must shift emphasis from individual consent to institutional obligation.

This includes:

Limiting what inferences can be drawn under constraint

Restricting the reuse of inferences beyond their original care context

Preventing commercial exploitation of inferred vulnerability

Ensuring patients are not penalized for disengaging

In other words, systems must assume responsibility for the power they hold, regardless of whether consent was obtained.
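One way to picture this kind of obligation is as a rule the system enforces on itself. The sketch below is illustrative only: the `Inference` record, the `may_use` function, and the purpose names are hypothetical and are not drawn from any real product or standard. It shows an inference bound to the care context in which it was drawn, with reuse elsewhere and commercial use refused outright.

```python
from dataclasses import dataclass

# Hypothetical purpose labels, chosen only for illustration.
COMMERCIAL_PURPOSES = {"marketing", "insurance_pricing"}


@dataclass(frozen=True)
class Inference:
    label: str         # what the system inferred, e.g. "elevated anxiety risk"
    care_context: str  # identifier of the care episode in which it was drawn


def may_use(inference: Inference, purpose: str, requesting_context: str) -> bool:
    """Permit use only inside the original care context, and never commercially."""
    if purpose in COMMERCIAL_PURPOSES:
        return False
    return requesting_context == inference.care_context


inf = Inference("elevated anxiety risk", "triage-episode-1")
print(may_use(inf, "treatment", "triage-episode-1"))  # same episode: allowed
print(may_use(inf, "treatment", "billing-review"))    # different context: refused
print(may_use(inf, "marketing", "triage-episode-1"))  # commercial use: refused
```

Note that the check never consults a consent flag. That is the design point of the sketch: the limit holds whether or not the patient signed anything.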

Designing for Dignity Under Constraint

Responsible healthcare AI systems recognize that not all consent contexts are equal.

They distinguish between:

Elective engagement

Constrained engagement

Emergency engagement

And they adapt system behavior accordingly.

This may include:

Reduced inference scope in high-distress contexts

Delayed secondary use of data

Mandatory human review before consequential decisions

Explicit expiration of inferences tied to crisis moments

These are governance choices, not technical limitations.
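To make that point concrete, the adaptations above can be written down as an explicit policy table. This is a minimal sketch under stated assumptions: the `Engagement` and `Policy` names, the inference categories, and every duration are hypothetical placeholders, not recommended values. What it shows is that the behavior in each context is a setting someone chooses.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional


class Engagement(Enum):
    ELECTIVE = "elective"
    CONSTRAINED = "constrained"
    EMERGENCY = "emergency"


@dataclass(frozen=True)
class Policy:
    allowed_inferences: frozenset        # inference categories the system may draw
    secondary_use_delay: timedelta       # wait before any secondary use of data
    human_review_required: bool          # review before consequential decisions
    inference_ttl: Optional[timedelta]   # None means no automatic expiry


# All categories and durations below are placeholder assumptions.
POLICIES = {
    Engagement.ELECTIVE: Policy(
        frozenset({"diagnostic", "risk_score", "behavioral"}),
        timedelta(days=0), False, None),
    Engagement.CONSTRAINED: Policy(
        frozenset({"diagnostic", "risk_score"}),
        timedelta(days=30), True, timedelta(days=180)),
    Engagement.EMERGENCY: Policy(
        frozenset({"diagnostic"}),
        timedelta(days=90), True, timedelta(days=30)),
}


def inference_allowed(engagement: Engagement, kind: str) -> bool:
    """Narrower inference scope as the context becomes more constrained."""
    return kind in POLICIES[engagement].allowed_inferences


def is_expired(engagement: Engagement, created_at: datetime, now: datetime) -> bool:
    """An inference expires once its context-specific lifetime has passed."""
    ttl = POLICIES[engagement].inference_ttl
    return ttl is not None and now - created_at > ttl
```

Under these placeholder settings, a behavioral inference is permitted in an elective encounter but refused in an emergency one, and an inference drawn in an emergency expires after 30 days. Changing any of those outcomes means editing a line in the table, which is a governance decision rather than an engineering constraint.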

Why This Matters Now

Healthcare AI adoption is accelerating fastest in precisely those environments where choice is most constrained:

Under-resourced systems

Marginalized populations

Crisis-driven care settings

If consent is treated as sufficient in these contexts, systems will quietly normalize the extraction of data under pressure.

That normalization will be difficult to undo.

The Deeper Shift Required

The ethical foundation of healthcare AI cannot rest solely on consent.

It must rest on a recognition that:

Vulnerability alters agency

Scarcity reshapes choice

Speed amplifies imbalance

In such conditions, responsibility must shift upstream, from individuals to institutions, and from permission to design.

Consent remains necessary.

But it is no longer sufficient.

What Comes Next

If consent cannot anchor accountability, then the next question becomes unavoidable:

Who is responsible when AI-enabled healthcare systems cause harm, and how is that responsibility enforced?

That is where we turn next.