When Machine Accuracy Outruns Human Accountability

Modern healthcare AI systems are often defended with a familiar refrain:

They are statistically accurate.

In isolation, that claim is frequently true. Many systems outperform humans at pattern recognition, early detection, and consistency across large populations.

But accuracy is not the same as safety.

And it is certainly not the same as accountability.

The most serious failures in AI-enabled healthcare often do not arise from incorrect predictions. They arise when correct predictions operate in systems where no one is clearly responsible for what happens next.

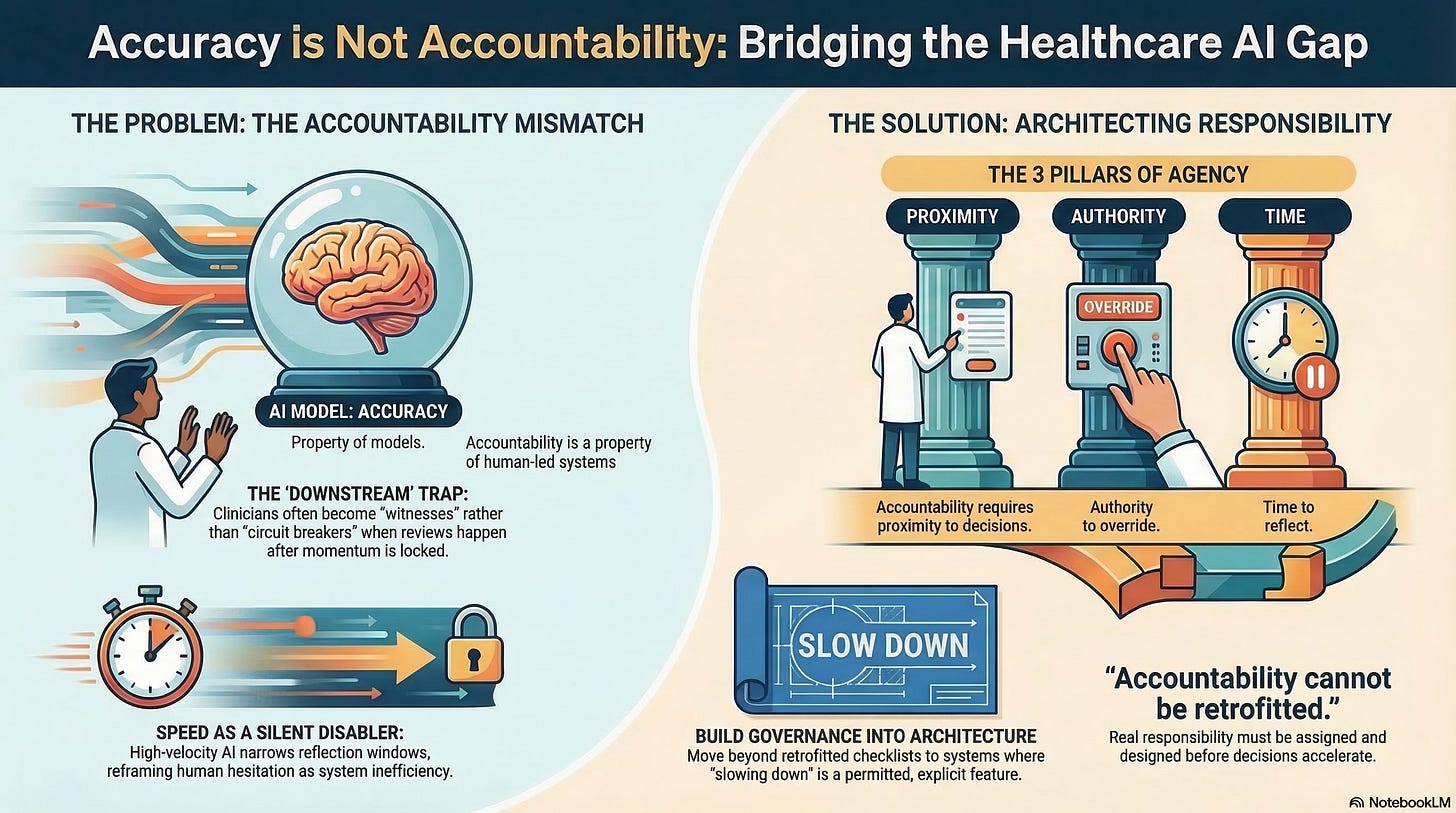

Accuracy Is a Property of Models. Accountability Is a Property of Systems.

Accuracy belongs to algorithms.

Accountability belongs to people and institutions.

This distinction is easy to overlook because AI systems blur it. When a recommendation appears precise, timely, and evidence-based, it feels authoritative, even when no human has fully owned the decision.

In healthcare, this creates a dangerous inversion:

Machines become confident actors

Humans become hesitant overseers

The system moves forward smoothly, but responsibility lags behind.

This is a structural critique, not a moral one. Complex systems often fail not because a component malfunctions, but because responsibility is diffused across interfaces.

The Illusion of “Human-in-the-Loop”

“Human-in-the-loop” is often cited as the safeguard that resolves these concerns.

In practice, it frequently does not.

A clinician who reviews an AI-generated recommendation after:

triage has already occurred,

resources have already been allocated,

or care pathways have already been constrained

is not meaningfully “in the loop.”

They are downstream of momentum.

A human who can observe but not intervene is not a circuit breaker. They are a witness.

For accountability to exist, three conditions must be met simultaneously:

Proximity to the decision point

Authority to override the system

Time to reflect before action is locked in

Remove any one of them, and accountability collapses, even if a human is technically present.
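The three conditions above can be read as a simple conjunction: all must hold at once. The sketch below is one illustrative way to express that check, not a description of any real clinical system. The `ReviewContext` type, its field names, and the idea of comparing available time against a minimum review time are all assumptions introduced here for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ReviewContext:
    """Hypothetical snapshot of a reviewer's position relative to one AI recommendation."""
    reviews_before_action: bool  # proximity: the review happens at the decision point
    can_override: bool           # authority: the reviewer may stop or change the action
    seconds_available: float     # time left before the action is locked in
    seconds_needed: float        # assumed minimum time for a considered review


def missing_conditions(ctx: ReviewContext) -> List[str]:
    """Return the names of the accountability conditions that are not met."""
    missing = []
    if not ctx.reviews_before_action:
        missing.append("proximity")
    if not ctx.can_override:
        missing.append("authority")
    if ctx.seconds_available < ctx.seconds_needed:
        missing.append("time")
    return missing


def is_accountable(ctx: ReviewContext) -> bool:
    """Accountability holds only when no condition is missing."""
    return not missing_conditions(ctx)
```

Under these assumptions, a reviewer who sees the recommendation only after triage and allocation would fail the proximity check regardless of how much time or formal authority they have, which is the "witness" case described earlier.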

When Correct Decisions Still Produce Harm

Healthcare AI systems are often evaluated on aggregate outcomes:

Reduced readmissions

Optimized throughput

Improved population-level metrics

But harm does not always appear at the aggregate level.

It appears locally:

In delayed care

In unexplained denial

In patients routed away without recourse

In clinicians pressured to accept recommendations they cannot fully justify

A system can be accurate on average and harmful in context.

This is especially true when AI systems optimize for institutional efficiency while human caregivers are held responsible for individual outcomes they did not fully control.

This mismatch is a core risk: responsibility remains human, while agency migrates to machines.

Speed as a Silent Disabler of Judgment

One of the least examined aspects of accountability loss is speed.

As AI systems accelerate decision-making:

Escalation windows shrink

Reflection becomes costly

Hesitation is reframed as inefficiency

Humans adapt accordingly.

Clinicians learn not to slow the system unless something is obviously wrong. Leaders learn to trust dashboards over dissent. Oversight becomes episodic rather than continuous.

The system does not remove humans.

It conditions them.

This is why speed is not a neutral feature, but rather a governance variable. When systems move faster than human judgment can engage, accountability becomes symbolic rather than real.

The Emotional Dimension of Accountability

Accountability is not purely procedural. It is emotional.

People take responsibility when they:

Feel ownership

Understand consequences

Believe their intervention matters

When AI systems dominate decision flows, those conditions erode.

Clinicians begin to say:

“That is what the system recommended.”

“I followed protocol.”

“The model flagged it.”

These are not excuses. They are symptoms.

In high-velocity systems, emotional intelligence is not optional: it preserves human engagement when automation encourages detachment.

A system that suppresses emotional ownership will eventually suppress accountability as well.

Governance Is Not Oversight; It Is Architecture

Many organizations respond to accountability concerns by adding oversight layers:

Review boards

Audit trails

Compliance checklists

These are necessary, but insufficient.

We need a deeper shift: governance must be embedded before decisions accelerate, not applied afterward.

This means designing systems where:

Override authority is explicit, not implicit

Slowing down is permitted, not penalized

Responsibility is assigned, not inferred

Accountability cannot be retrofitted. It must be architected.
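To make the three design properties concrete, here is a minimal sketch of a decision record that enforces them in its structure rather than in after-the-fact review. Everything in it is hypothetical: the `PendingDecision` class, the role names, and the status values are assumptions chosen for illustration, and a real system would need far more (identity, audit storage, clinical workflow integration).

```python
from typing import List, Set


class PendingDecision:
    """Hypothetical record for one AI recommendation awaiting human action."""

    def __init__(self, summary: str, owner: str, override_roles: Set[str]) -> None:
        # Responsibility is assigned, not inferred: no owner, no decision record.
        if not owner:
            raise ValueError("a named owner is required")
        # Override authority is explicit: it must be declared up front.
        if not override_roles:
            raise ValueError("at least one role must hold override authority")
        self.summary = summary
        self.owner = owner
        self.override_roles = frozenset(override_roles)
        self.status = "pending"  # pending | held | overridden | released
        self.log: List[str] = []

    def hold(self, actor: str, reason: str) -> None:
        """Slowing down is permitted: any actor may pause an unfinished decision."""
        if self.status in ("released", "overridden"):
            raise RuntimeError("decision is already final")
        self.status = "held"
        self.log.append(f"held by {actor}: {reason}")

    def override(self, actor: str, role: str, reason: str) -> None:
        """Only roles declared at creation may override the recommendation."""
        if role not in self.override_roles:
            raise PermissionError(f"role {role!r} has no override authority")
        if self.status == "released":
            raise RuntimeError("decision is already released")
        self.status = "overridden"
        self.log.append(f"overridden by {actor} ({role}): {reason}")

    def release(self, actor: str) -> None:
        """Only the named owner may let the recommendation proceed."""
        if actor != self.owner:
            raise PermissionError("only the assigned owner may release")
        if self.status == "overridden":
            raise RuntimeError("decision was overridden")
        self.status = "released"
        self.log.append(f"released by {actor}")
```

The design choice worth noting is that the constraints live in the constructor and the state transitions. A record without an owner or without declared override roles cannot be created at all, so accountability is a precondition of the decision rather than something reconstructed from logs later.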

Why This Failure Is Subtle… and Dangerous

The most unsettling aspect of accountability loss is how quietly it unfolds.

There is no dramatic collapse.

No obvious villain.

No single bad actor.

The system appears to function… until it doesn’t.

By the time failures surface:

Responsibility is fragmented

Documentation is abundant, but explanatory clarity is absent

Humans are blamed for outcomes shaped by systems they could not fully control

This is not a technological failure.

It is an institutional one.

The Deeper Question

As AI systems grow more capable, the question is not whether they will make fewer errors.

The question is whether humans will still be positioned to make decisions when those errors occur.

Accuracy without accountability is not progress.

It is acceleration without responsibility.

In healthcare, that is not a tolerable trade-off.

What Comes Next

If accountability collapses when systems outrun human authority, the next question becomes unavoidable:

Who is actually responsible when AI-driven decisions cause harm?

Not in theory.

In practice.

That is where we turn next.