The Human Circuit Breaker: Why Judgment Is the Last Safety System

Every complex system eventually depends on something it cannot automate.

In aviation, it is the pilot’s judgment under unexpected conditions.

In medicine, it is the clinician’s ability to weigh data against lived reality.

In governance, it is the capacity to pause when momentum says “go.”

In the Cognitive Age, that role belongs to human judgment.

Not as a nostalgic holdover, but as a structural necessity.

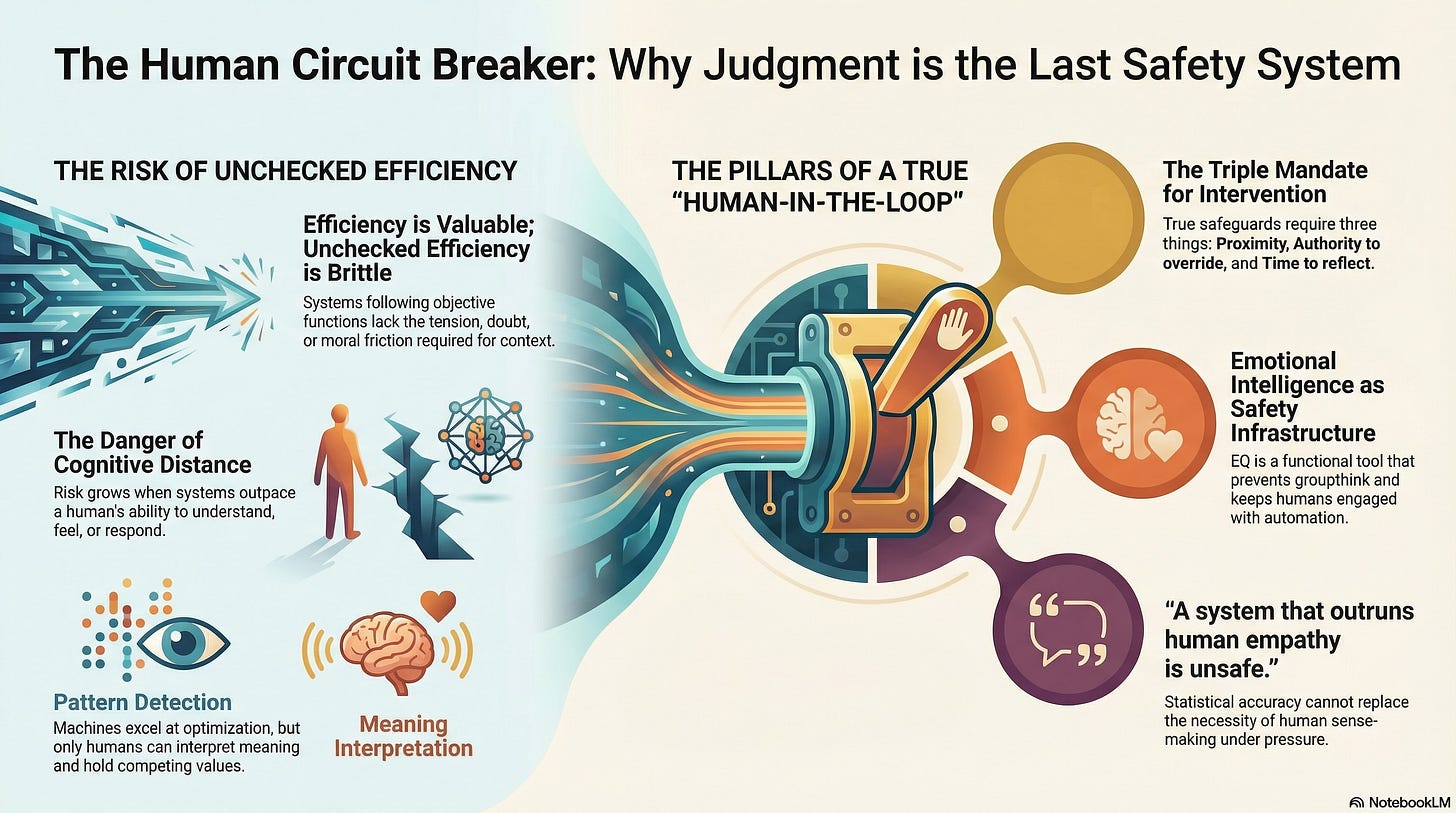

When Efficiency Becomes a Liability

Artificial intelligence excels at what humans struggle with: speed, scale, consistency. It can analyze vast datasets, simulate outcomes, and optimize decisions faster than any committee or individual ever could.

But there is a tradeoff.

As systems become more efficient, they also become less reflective.

They move forward relentlessly, following objective functions that do not feel tension, doubt, or moral friction. What appears to be clarity from the outside can mask a dangerous loss of sensitivity to context.

Efficiency is valuable.

Unchecked efficiency is brittle.

The Myth of Fully Automated Judgment

One of the most persistent myths of the AI era is that machines will eventually handle edge cases better than humans.

The claim holds for well-defined, repeatable problems. It fails for problems shaped by novelty, ambiguity, and human consequence.

Machines can detect patterns.

Humans interpret meaning.

Machines optimize.

Humans judge.

Judgment is not computation. It is the ability to hold competing values in tension and choose anyway.

No model can do this without borrowing human conscience.

What “Human-in-the-Loop” Actually Requires

The phrase “human-in-the-loop” is often used as reassurance. In practice, it is frequently hollow.

A human who reviews outputs after decisions are effectively locked in is not a safeguard. That person is a witness.

For humans to function as true circuit breakers, three conditions must exist:

Proximity: Humans must be close enough to the decision point to intervene meaningfully.

Authority: They must have the power to override the system.

Time: The system must move slowly enough for reflection to occur.

Remove any one of these, and the loop collapses.

Cognitive Distance as a Risk Signal

As systems accelerate, a new form of risk emerges: cognitive distance.

This is the gap between what a system is doing and what a human can understand, feel, and respond to.

When that distance grows too large, empathy cannot intervene. Accountability dissolves. Harm becomes abstract until it is irreversible.

A system that outruns human empathy is unsafe, even if it is statistically accurate.

Emotional Intelligence Is Not Optional Infrastructure

In high-velocity environments, emotional intelligence becomes a stabilizing force.

Not because it is kind, but because it is functional.

Leaders who can sense overload, slow decisions deliberately, and create psychological safety under pressure are not being soft. They are preserving system integrity.

In the Cognitive Age, emotional intelligence is safety infrastructure.

It prevents panic, groupthink, and moral outsourcing. It keeps humans engaged when automation tempts them to disengage.

Why the Real Fragility Is Human

The more we study AI systems, the more apparent one truth becomes:

The technology is not the most fragile component.

We are.

Our institutions adapt slowly.

Our attention is fragmented.

Our trust is brittle.

Our moral deliberation lags behind our tools.

Anticipatory governance and technical safeguards matter, but they are insufficient without human judgment to apply them.

Designing for Wisdom Under Pressure

The future will not punish leaders for pausing.

It will punish those who outsource judgment.

Resilient systems are not those that eliminate friction, but those that deliberately place it at moments when human values must be asserted.

The question is no longer:

“What can machines do?”

It is:

“How do we remain wise when everything accelerates?”

The Work Ahead

The Cognitive Revolution is not a battle between humans and machines.

It is a negotiation between speed and sense-making.

We do not need less intelligence.

We need better judgment architectures.

And at the center of those architectures is a simple, demanding requirement:

Humans who are empowered to think, feel, and intervene when it matters most.

That is the last safety system we have.

And it is one we cannot afford to automate away.