The Clinician Apprenticeship Gap: How Automation Erodes Medical Judgment

Clinical judgment is not learned all at once.

It is formed slowly, through exposure to uncertainty, repetition, failure, and guided decision-making under supervision. Medicine has always relied on apprenticeship, not only to transfer knowledge but also to cultivate discernment.

That process is now under quiet strain.

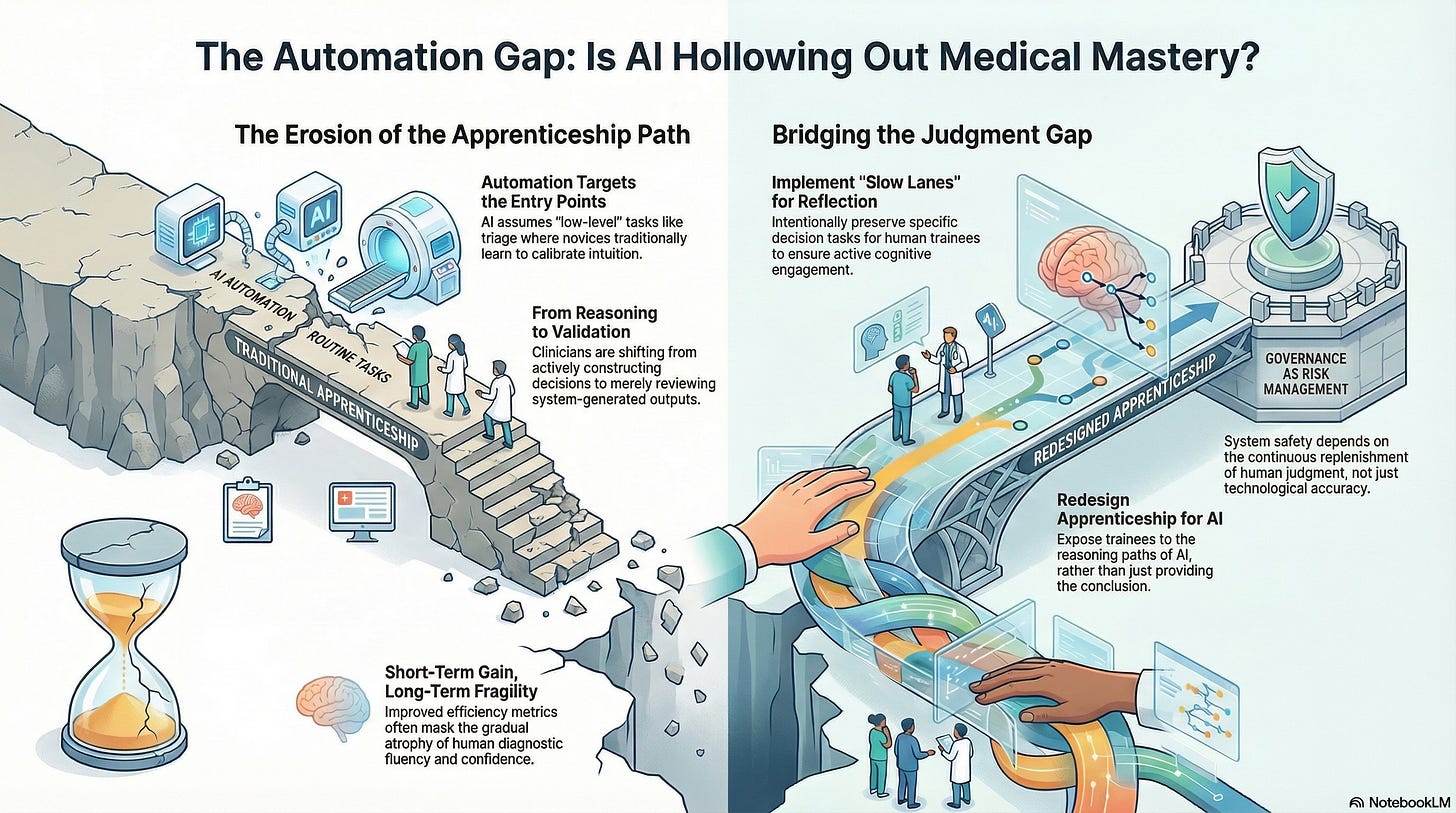

As AI systems assume greater roles in diagnostic, triage, and administrative tasks, they are reshaping not only how care is delivered but also how clinicians are trained. The risk is not that machines will replace doctors. It is that they will hollow out the pathways through which doctors learn to become good ones.

How Judgment Is Actually Built

Medical training is often described in terms of milestones, competencies, and certifications. But beneath those formal structures lies something less measurable: the formation of judgment.

Judgment develops when clinicians:

Encounter ambiguous cases

Make provisional decisions

Receive feedback from outcomes

Reflect with more experienced colleagues

Gradually internalize patterns, limits, and exceptions

This process depends on exposure, especially to early-stage decision-making. That is where intuition is calibrated and ethical responsibility takes shape.

Remove that exposure, and judgment does not disappear immediately.

It simply stops developing.

Automation Targets the Entry Points

AI systems are disproportionately deployed at the front end of clinical work:

Symptom assessment

Risk stratification

Diagnostic suggestions

Documentation and coding

Triage and routing

These tasks are often framed as “low-level” or “routine.” In reality, they are the very tasks through which novices learn to think.

Early-career clinicians historically cut their teeth on precisely these moments:

Deciding what to ask next

Forming differential diagnoses

Sensing when a patient does not fit the template

Learning when uncertainty matters more than speed

When automation absorbs these layers, trainees inherit conclusions without participating in the reasoning that produced them.

The Shift from Doing to Reviewing

In highly automated environments, the role of the clinician subtly shifts.

Instead of actively constructing decisions, they are asked to:

Review system-generated outputs

Confirm recommendations

Escalate only when something feels obviously wrong

This changes the cognitive posture of clinical work.

Reviewing is not the same as reasoning.

Validation is not the same as judgment.

Over time, clinicians become skilled at monitoring systems rather than interrogating situations. The system appears to reduce error, but it also reduces opportunities to learn from near-misses, misclassifications, and uncertainty.

Why This Gap Is Hard to See

The apprenticeship gap is difficult to detect because short-term outcomes often improve.

Automation:

Reduces workload

Increases consistency

Improves average performance metrics

From an institutional perspective, this appears to be progress.

But judgment is a long-cycle capability. Its absence does not register immediately. It becomes visible only when:

Novel cases arise

Systems fail at the edges

Human intervention is urgently required

At that point, the question is no longer whether clinicians are present, but whether they are prepared.

Skill Atrophy Is a Systemic Risk

In aviation, pilots train extensively for rare failure modes precisely because automation handles most routine tasks. Healthcare has not yet adopted comparable compensatory structures.

As AI systems absorb more cognitive labor, clinicians risk losing:

Diagnostic fluency

Confidence in override decisions

Comfort with uncertainty

Ethical deliberation under pressure

This is not a critique of individual clinicians. It is a predictable outcome of system design.

Skills that are not practiced do not remain sharp.

Judgment that is not exercised does not deepen.

The False Promise of “Freeing Clinicians to Do What Matters”

A common argument in favor of automation is that it frees clinicians to focus on “higher-value” tasks: empathy, communication, and complex decision-making.

In principle, this is appealing.

In practice, it often fails.

When entry-level cognitive work is automated without intentional redesign of training pathways, clinicians are expected to make complex judgments without having fully developed those skills.

You cannot skip the apprenticeship and still expect mastery.

Empathy without judgment is not care.

Complex decisions without grounding are not wisdom.

Responsibility Without Preparation

One of the most troubling dynamics emerges when accountability remains human while judgment formation erodes.

Clinicians remain legally and ethically responsible for outcomes, even as:

Decision latitude narrows

System recommendations dominate

Opportunities to practice independent reasoning decline

This creates a dangerous asymmetry: responsibility without preparation.

Over time, this contributes to:

Moral distress

Professional burnout

Defensive reliance on systems

Erosion of trust between clinicians and institutions

A system that demands responsibility must also protect the conditions under which responsibility can be exercised competently.

Designing Apprenticeship for the Cognitive Age

If automation is here to stay, and it is, then apprenticeship must be deliberately redesigned, not implicitly sacrificed.

This requires intentional choices:

Preserving certain decision tasks for human trainees

Creating “slow lanes” where reflection is required

Exposing trainees to reasoning paths, not just outputs

Rewarding questioning rather than compliance

Judgment does not emerge spontaneously from oversight roles.

It must be cultivated.

Healthcare systems that fail to do this may gain efficiency in the short term, but at the cost of fragility in the long term.

Why This Is a Governance Issue

The apprenticeship gap is not merely an educational concern. It is a governance concern.

A system that cannot replenish human judgment is unsafe by design.

Governance must therefore address not only what AI systems do today but also the kind of professionals they are shaping for tomorrow. Decisions about automation are decisions about future capability.

Ignoring that fact is itself a form of risk outsourcing.

Looking Ahead

As clinicians transition from hands-on decision-makers to system supervisors, the definition of clinical expertise shifts.

Understanding that shift and deciding where to draw boundaries are essential if healthcare is to remain a human practice supported by technology, rather than a technological practice supervised by humans.

That transition and its implications are where we turn next.