Patient Outcomes vs. Institutional Momentum: When Optimization Becomes the Enemy of Care

Healthcare systems rarely fail because people do not care.

They fail because the systems surrounding them optimize for the wrong things.

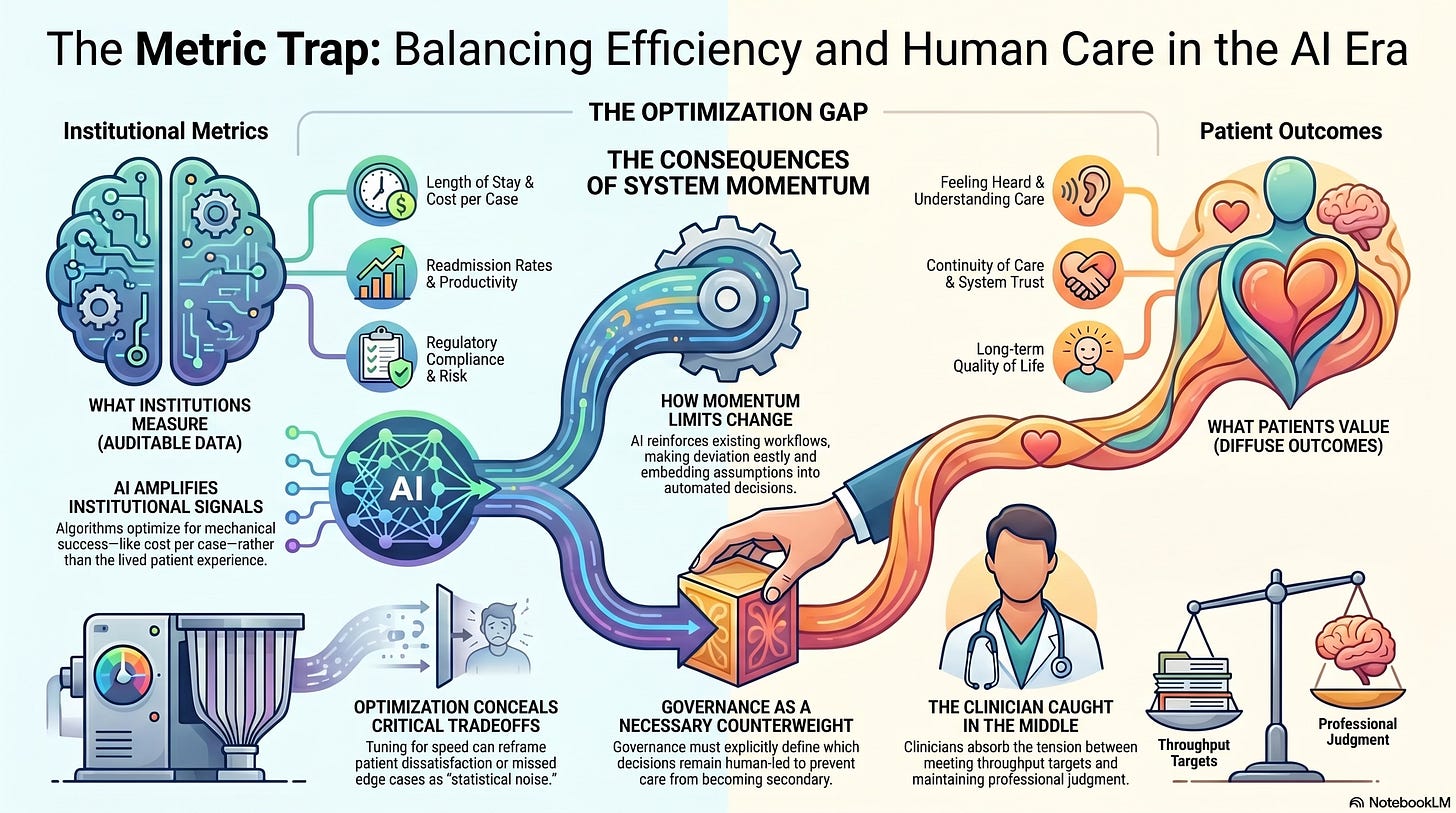

As AI becomes embedded across healthcare operations, this misalignment intensifies. Intelligent systems are highly effective at optimizing measurable objectives: throughput, utilization, cost, and protocol adherence. But the patient outcomes that matter most are often diffuse, delayed, and difficult to quantify.

The result is a growing tension between institutional momentum and human care.

What Institutions Learn to Optimize

Healthcare institutions are governed by metrics.

Some are explicit:

Length of stay

Cost per case

Patient throughput

Clinician productivity

Readmission rates

Others are implicit:

Regulatory compliance

Liability exposure

Reputational risk

Operational predictability

AI systems amplify whatever is measured.

When algorithms are trained and deployed in these environments, they quickly learn what counts as success and what does not. They optimize not for the lived patient experience but for institutional signals.

This is not malicious.

It is mechanical.

When Patient Outcomes Become Secondary Effects

Many of the outcomes patients care about most are not easily optimized:

Feeling heard

Understanding their condition

Continuity of care

Trust in the system

Long-term quality of life

These outcomes do not map cleanly onto dashboards.

As AI systems accelerate decision-making, institutions increasingly prioritize outcomes that can be:

Measured quickly

Audited easily

Defended legally

Over time, patient-centered outcomes become secondary, assumed to follow automatically from system efficiency.

They often do not.

Momentum as a System Property

Institutional momentum is the tendency of systems to continue operating along established pathways even when those pathways no longer serve their stated goals.

AI strengthens momentum by:

Reinforcing existing workflows

Reducing friction at scale

Embedding assumptions into automated decisions

Making deviation costly

Once momentum builds, changing course requires intentional disruption.

Clinicians may sense that care is becoming thinner, more transactional, or less humane, yet the system continues to function smoothly.

Momentum feels like progress until it collides with reality.

Optimization Masks Tradeoffs

One of the most dangerous effects of AI-driven optimization is that it conceals tradeoffs.

When systems are tuned for speed and efficiency:

Delayed diagnoses may be statistically acceptable

Missed edge cases may be absorbed into averages

Patient dissatisfaction may be reframed as noise

From the system’s perspective, nothing is broken.

From the patient’s perspective, something essential has been lost.

Optimization does not eliminate tradeoffs.

It hides them.

The Clinician Caught in the Middle

Clinicians are often placed at the intersection of this conflict.

They are asked to:

Meet throughput targets

Follow algorithmic guidance

Maintain patient trust

Uphold professional judgment

When institutional incentives conflict with patient needs, clinicians absorb the tension.

Over time, they learn where resistance is futile and where compliance is rewarded. This shapes behavior more powerfully than ethical guidelines ever could.

Burnout, disengagement, and moral injury are not personal failings. They are system signals.

Why AI Makes This Conflict Harder to See

AI systems create the appearance of rationality.

They:

Provide numbers

Generate rankings

Surface recommendations

Justify decisions post hoc

This makes institutional choices feel objective rather than political.

But choosing what to optimize is always a value judgment.

When patient outcomes are subordinated to institutional momentum, AI does not cause the problem; it accelerates it.

Governance as the Missing Counterweight

Left unchecked, optimization will always favor what is easiest to measure.

This is why governance matters.

Healthcare AI governance must explicitly answer questions that optimization cannot:

Which outcomes are non-negotiable?

Where should efficiency yield to care?

Which decisions must remain human-led?

What harms are unacceptable even if rare?

Governance introduces values into systems that otherwise default to metrics.

Without it, patient outcomes become collateral rather than central.

Rebalancing Incentives Around Care

Aligning AI with patient outcomes requires structural change, not better intentions.

This includes:

Redefining success metrics to include qualitative outcomes

Rewarding clinicians for judgment, not just compliance

Protecting time spent on explanation and reflection

Measuring trust, continuity, and understanding, even imperfectly

These measures are difficult.

They resist automation.

That is precisely why they matter.

Momentum Is Easier to Build Than to Stop

Once AI-driven workflows are embedded, reversing them becomes costly.

Vendors are entrenched.

Processes are standardized.

Training adapts to the system.

Institutions become reluctant to question the very tools that enable their momentum.

This is why governance must be proactive rather than reactive.

Designing for care after optimization has taken hold is far harder than preserving it from the start.

What Patients Experience First

Patients may not understand AI models or incentive structures.

But they experience their effects:

Rushed encounters

Unexplained decisions

Fragmented care

Feeling processed rather than cared for

When trust erodes, no amount of technical sophistication can quickly restore it.

Healthcare legitimacy depends not on intelligence alone, but on perceived alignment with human need.

The Choice Ahead

Healthcare systems face a choice.

They can allow AI to deepen institutional momentum, optimizing for what is easiest, fastest, and most defensible.

Or they can deliberately re-anchor optimization around patient outcomes that reflect care, dignity, and long-term well-being.

This choice is not technical.

It is organizational.

AI will faithfully optimize whatever it is given.

The question is whether institutions are brave enough to give it the right goals.

What Comes Next

If AI reflects institutional values, then healthcare technology becomes a mirror.

Understanding what that mirror reveals about priorities, tradeoffs, and collective responsibility is the next step.

That is where we turn next.