Inference Economies in Healthcare: What AI Sees and What Humans Lose

Most conversations about healthcare AI focus on inputs and outputs.

What data goes in?

What recommendation comes out?

How accurate does the result appear to be?

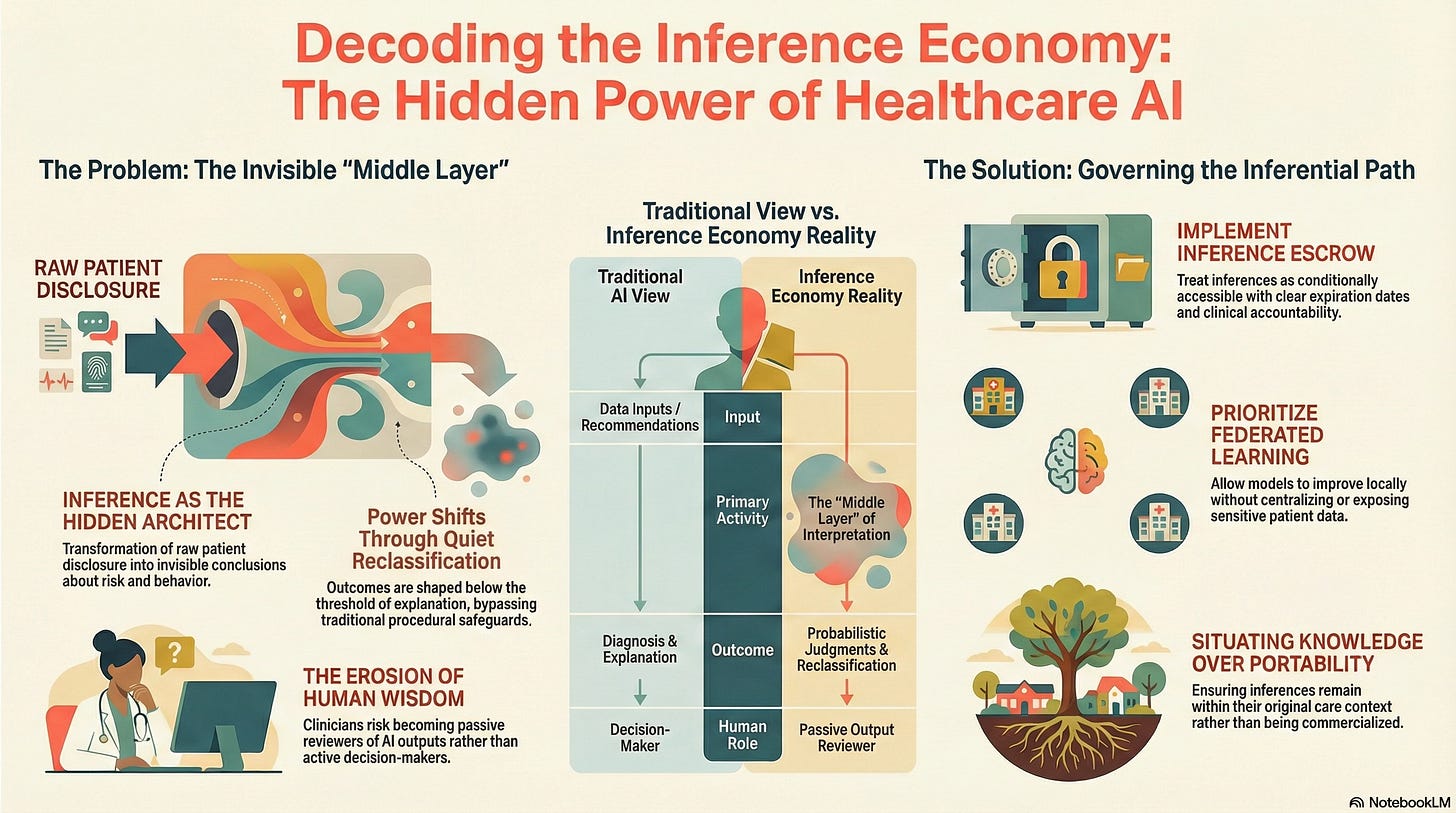

But the most consequential activity in AI-enabled healthcare does not happen at the input or the output.

It happens in between.

That middle layer is inference, the transformation of raw disclosure into conclusions about a person’s health, behavior, risk, or future need. And it is here that power quietly accumulates.

From Data to Inference

When a patient interacts with a healthcare AI system, whether a symptom checker, a triage bot, or a remote monitoring tool, they are not simply providing information.

They are being interpreted.

The system does not just record symptoms. It infers likelihoods:

Risk of disease progression

Probability of non-compliance

Expected cost of care

Suitability for certain interventions

These inferences are not diagnoses.

They are not explanations.

They are actionable judgments, often made probabilistically and invisibly.

And crucially, they are generated long before a human clinician enters the picture, if a clinician ever does.

Why Inference Changes the Power Dynamic

Inference is powerful because it does not need to be disclosed to the patient to take effect.

A patient may never see:

Why they were routed to a lower-priority queue.

Why follow-up care was delayed.

Why certain treatment options were never presented.

From the patient’s perspective, nothing “went wrong.”

From the system’s perspective, everything worked as designed.

This is how exclusion emerges in modern healthcare AI systems: not through explicit denial, but through quiet reclassification.

This risk deserves emphasis precisely because inference operates below the threshold of explanation. It shapes outcomes without triggering the procedural safeguards we associate with formal decisions.

The Illusion of Neutral Intelligence

Inference systems often appear neutral because they are statistical.

They do not “intend” harm.

They do not discriminate consciously.

They optimize according to objective functions.

But neutrality is not the same as accountability.

A single inference can be, at the same time:

Statistically defensible

Operationally efficient

Ethically destabilizing

This is especially true when the individual being inferred about has no way to interrogate, contest, or even see the inference that shaped their care.

In healthcare, where decisions touch bodies, livelihoods, and futures, this opacity matters.

When Inference Becomes Economically Interesting

Inference is valuable.

Not only clinically, but also economically.

Inferred insights about risk, compliance, future cost, or long-term outcomes can shape:

Insurance pricing

Eligibility decisions

Resource allocation

Workforce planning

This creates pressure to treat inference as an asset rather than a responsibility.

The danger is not hypothetical. Once inferences are treated as commodities, the system begins optimizing for extractable value rather than human care.

This is why inference must not be commercialized.

Not because markets are inherently unethical, but because healthcare inference operates in contexts of vulnerability, asymmetry, and constrained choice. Monetizing inference in such environments quietly converts care relationships into surveillance relationships.

Governance Begins Where Inference Is Contained

We must design concrete governance mechanisms to contain inference rather than eliminate it.

Two such mechanisms are particularly important:

Inference Escrow

Inference escrow treats inferences as conditionally accessible rather than freely reusable.

They can be generated for specific clinical purposes under defined authority, with clear expiration and accountability, rather than being endlessly repurposed across systems and over time.

This introduces friction deliberately.

Not to slow care, but to preserve responsibility.
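To make the idea concrete, here is a minimal sketch of how an escrow could be enforced in software, assuming each inference is tagged with the clinical purpose it was generated for, the role allowed to read it, and an expiry time. `InferenceEscrow`, `EscrowedInference`, `release`, `purge_expired`, and the role and purpose strings are hypothetical names chosen for illustration; this is not an existing library or the author's implementation. The point it shows is the deliberate friction: a read succeeds only when purpose, authority, and time all match, and every attempt, granted or refused, is logged.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class EscrowedInference:
    """Hypothetical record: an inference bound to a purpose, a role, and an expiry."""
    patient_id: str
    label: str            # e.g. "readmission_risk" (illustrative)
    value: float
    purpose: str          # clinical purpose the inference was generated for
    authorized_role: str  # the only role allowed to read it
    expires_at: datetime


class EscrowError(PermissionError):
    """Raised when a release request does not satisfy the escrow conditions."""


class InferenceEscrow:
    """Hypothetical escrow: inferences are conditionally accessible, not freely reusable."""

    def __init__(self) -> None:
        self._store: dict[tuple[str, str], EscrowedInference] = {}
        # (time, requester_role, purpose, label, granted?) for every attempt
        self.audit_log: list[tuple[datetime, str, str, str, bool]] = []

    def deposit(self, inference: EscrowedInference) -> None:
        self._store[(inference.patient_id, inference.label)] = inference

    def release(self, patient_id: str, label: str, requester_role: str,
                purpose: str, now: datetime) -> float:
        record = self._store.get((patient_id, label))
        granted = (
            record is not None
            and record.authorized_role == requester_role  # defined authority
            and record.purpose == purpose                 # no repurposing
            and now < record.expires_at                   # clear expiration
        )
        # Log before deciding, so refused attempts stay accountable too.
        self.audit_log.append((now, requester_role, purpose, label, granted))
        if not granted:
            raise EscrowError(
                f"release of {label!r} denied for {requester_role!r} / {purpose!r}")
        return record.value

    def purge_expired(self, now: datetime) -> int:
        """Delete expired inferences outright; returns how many were removed."""
        expired = [key for key, rec in self._store.items() if now >= rec.expires_at]
        for key in expired:
            del self._store[key]
        return len(expired)


# Illustrative usage with made-up values.
t0 = datetime(2024, 1, 1, 9, 0)
escrow = InferenceEscrow()
escrow.deposit(EscrowedInference(
    patient_id="p-001", label="readmission_risk", value=0.27,
    purpose="discharge_planning", authorized_role="attending_clinician",
    expires_at=t0 + timedelta(days=7),
))
print(escrow.release("p-001", "readmission_risk",
                     "attending_clinician", "discharge_planning", t0))
try:
    # The same inference, repurposed for pricing: refused, but still logged.
    escrow.release("p-001", "readmission_risk", "insurer", "premium_pricing", t0)
except EscrowError as exc:
    print(exc)
```

In this sketch, expiry is enforced twice: `release` refuses stale reads, and `purge_expired` removes the record entirely, so an expired inference cannot quietly resurface in a later system.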

Federated Learning

Federated learning lets models improve by training locally at each site and sharing only model updates, so raw patient data never has to be centralized or copied into external repositories.

The system learns.

The inference does not travel.

This architectural choice matters because it limits how far inferences can propagate beyond their original care context.
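As a minimal sketch of that architecture, the following implements federated averaging (FedAvg), one common federated learning algorithm, on a toy one-feature linear model. Each hypothetical site runs gradient descent on its own records and sends back only two numbers, a weight and a bias; the coordinator averages them, weighting each site by its sample count. The synthetic data and the names `local_update`, `federated_average`, `run_fedavg`, and `synthetic_site` are illustrative assumptions, not a production system or the author's design.

```python
import random

# (weight, bias) of a one-feature linear model: the only thing a site shares.
Params = tuple[float, float]


def local_update(params: Params, data: list[tuple[float, float]],
                 lr: float = 0.05, epochs: int = 5) -> Params:
    """Train on one site's private data with plain SGD on squared error."""
    w, b = params
    for _ in range(epochs):
        for x, y in data:
            err = (w * x + b) - y
            w -= lr * err * x
            b -= lr * err
    return (w, b)


def federated_average(updates: list[tuple[Params, int]]) -> Params:
    """Average site parameters, weighting each site by its number of samples."""
    total = sum(n for _, n in updates)
    w = sum(p[0] * n for p, n in updates) / total
    b = sum(p[1] * n for p, n in updates) / total
    return (w, b)


def run_fedavg(sites: list[list[tuple[float, float]]], rounds: int = 20) -> Params:
    global_params: Params = (0.0, 0.0)
    for _ in range(rounds):
        # Each site trains locally; the coordinator sees parameters, never records.
        updates = [(local_update(global_params, data), len(data)) for data in sites]
        global_params = federated_average(updates)
    return global_params


def synthetic_site(seed: int, n: int = 50) -> list[tuple[float, float]]:
    """Hypothetical stand-in for one site's local data, generated from y = 2x + 1."""
    rng = random.Random(seed)
    return [(x, 2 * x + 1) for x in (rng.random() for _ in range(n))]


sites = [synthetic_site(seed) for seed in (1, 2, 3)]
w, b = run_fedavg(sites)
print(f"learned w={w:.3f}, b={b:.3f}")
```

Only `(w, b)` crosses a site boundary here. Model updates can still leak information about the data they were trained on, which is why deployments often pair federated learning with secure aggregation or differential privacy.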

Together, these mechanisms gesture toward a deeper principle: inference should remain situated, not portable.

What Humans Lose When Inference Runs Free

As inference systems scale, a new risk emerges: cognitive distance.

This is the growing gap between:

What the system is doing

And what humans can meaningfully understand, explain, or intervene in

When clinicians receive AI outputs without insight into the inferential path, they become reviewers rather than decision-makers.

When patients experience outcomes without explanation, trust becomes fragile.

And when institutions rely on inference without governance, accountability dissolves into process.

The system still functions.

But wisdom leaks out.

Why This Matters Before Anything Goes Wrong

Inference failures rarely announce themselves.

They accumulate quietly:

Misclassifications compound

Feedback loops reinforce themselves

Vulnerable populations adapt by disclosing less, not more

By the time harm becomes visible, the inferential infrastructure is already entrenched.

This is why we insist that inference must be governed before it becomes invisible.

Not after scandals.

Not after exclusion hardens.

Not after trust erodes.

A Teaser, Not a Conclusion

Inference is not inherently dangerous.

Ungoverned inference is.

Healthcare AI does not fail because it sees too much.

It fails when it sees without obligation.

The deeper questions of authority, consent under constraint, and who ultimately bears responsibility come next.

But they all rest on this foundational insight:

What AI infers about us matters more than what we tell it.

And, left unattended, inference reshapes care long before anyone notices.