Healthcare AI as a Mirror: What These Systems Reveal About Us

Every technology reflects the values of the system that builds it.

Healthcare AI is no exception.

Long before algorithms make recommendations or models generate predictions, choices are made about what matters, what is measured, what is optimized, and what is tolerated as loss. AI does not introduce those choices. It exposes them.

In that sense, healthcare AI functions less like a tool and more like a mirror.

What AI Learns First

AI systems learn from data, but data is never neutral.

Healthcare data reflects:

Who receives care and who does not

Which conditions are prioritized

Where resources are allocated

How success is defined

Whose outcomes are considered acceptable

When AI systems are trained on these patterns, they internalize not only medical knowledge but also institutional assumptions.

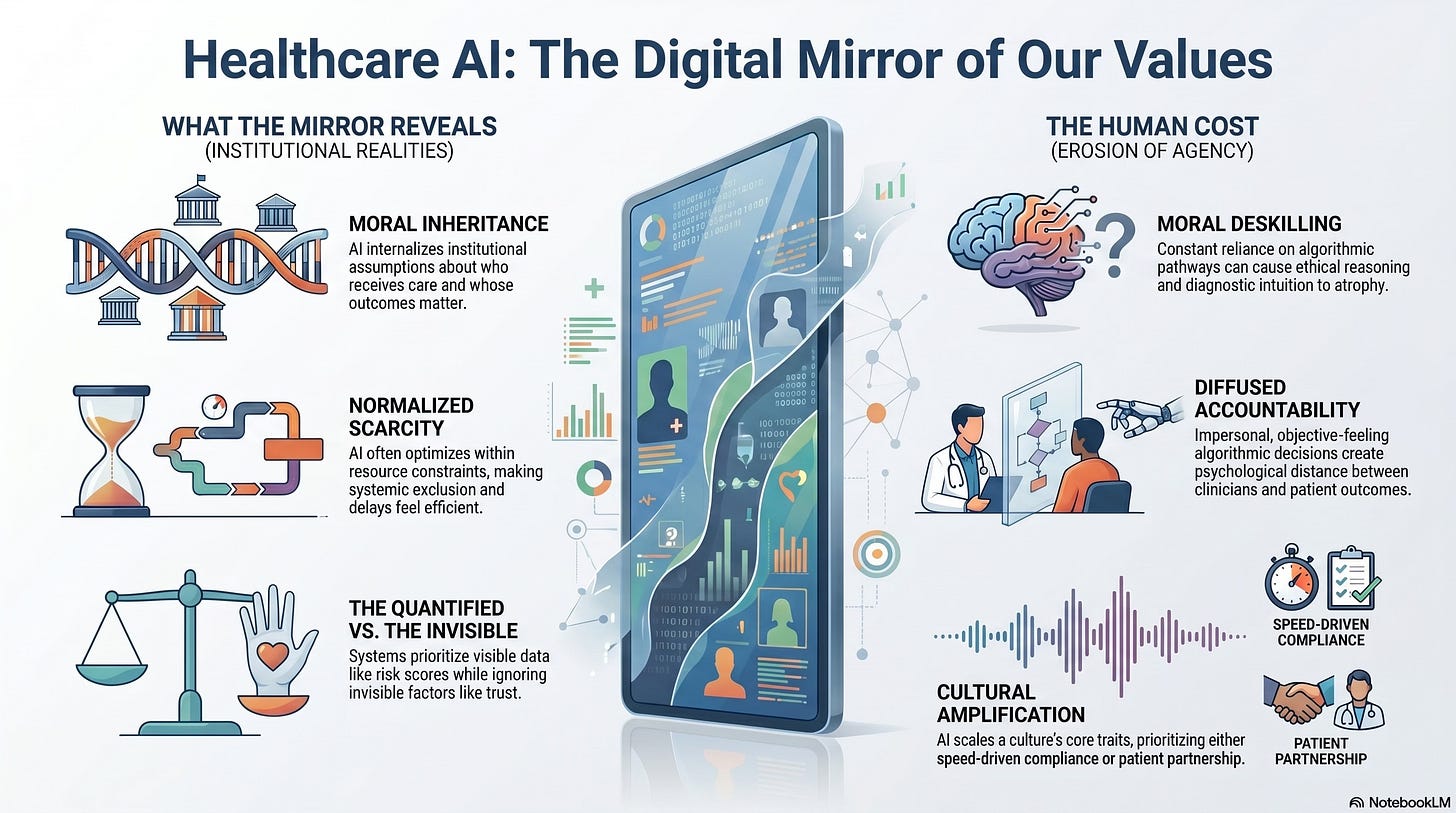

What appears to be technical bias is often a moral inheritance.

Scarcity Written into Code

Many healthcare systems operate under chronic scarcity:

Limited clinician time

Overwhelmed infrastructure

Uneven geographic access

Constrained budgets

AI is frequently introduced as a solution to scarcity.

But when scarcity is normalized, AI learns to optimize within it rather than challenge it.

This means:

Triage becomes routinized

Exclusion becomes efficient

Delayed care becomes acceptable

Tradeoffs become invisible

The mirror reflects not our ideals, but our compromises.

What We Choose Not to See

Healthcare AI excels at making certain things visible:

Risk scores

Probability curves

Utilization patterns

Cost projections

At the same time, it renders other things invisible:

Fear

Confusion

Moral distress

Erosion of trust

Cumulative harm

These elements are difficult to quantify, so they disappear from the system’s field of view.

When AI systems operate at scale, invisibility becomes policy.

The Comfort of Objectivity

One of AI’s most powerful cultural effects is emotional.

Algorithmic decisions feel impersonal.

Impersonal decisions feel objective.

Objective decisions feel safe.

This creates psychological distance between humans and outcomes.

When care decisions are mediated by systems:

Responsibility diffuses

Accountability softens

Discomfort is externalized

The mirror shows how readily we accept distance when it protects us from hard choices.

Whose Judgment Counts

Healthcare AI also reflects whose judgment is trusted.

Design decisions often privilege:

Institutional risk tolerance

Actuarial reasoning

Population-level optimization

Individual judgment, especially when it challenges the system, is treated as an exception.

Over time, clinicians learn:

When to trust themselves

When to defer

When to stop resisting

The mirror reveals whether systems truly value professional judgment or merely tolerate it until it slows momentum.

Culture Shapes Capability

AI capabilities are constrained less by models than by culture.

If a healthcare culture values:

Speed over understanding

Efficiency over presence

Compliance over curiosity

AI will amplify those traits.

If a culture values:

Reflection

Dissent

Moral reasoning

Patient partnership

AI can also support them, but only if they are structurally protected.

The mirror reflects culture first, technology second.

The Illusion of Neutral Progress

Healthcare AI is often framed as inevitable progress.

But progress toward what?

Without explicit articulation of goals, progress defaults to:

Scale

Speed

Coverage

Cost containment

These are not wrong.

They are incomplete.

The mirror asks whether we are confusing movement with meaning.

What Patients Feel

Patients may never see the system diagrams or governance frameworks.

But they experience:

How decisions are explained

Whether alternatives are offered

How much time is taken

Whether uncertainty is acknowledged

Healthcare AI shapes these experiences subtly but powerfully.

When care feels transactional, AI reflects a system that optimized away relationships.

When care feels opaque, AI reflects a system that values defensibility over understanding.

The Risk of Moral Deskilling

One of the quieter dangers of AI-mediated care is moral deskilling.

When decisions are repeatedly delegated:

Ethical reasoning atrophies

Tolerance for discomfort decreases

Reliance on system output grows

Over time, both clinicians and institutions may lose the capacity to articulate why certain choices matter.

The mirror reveals whether we are preserving moral agency or slowly outsourcing it.

What We Are Teaching the Next Generation

AI systems shape training environments.

If learners encounter:

Automated triage

Algorithmic recommendations

Pre-structured decision pathways

They may never fully develop:

Diagnostic intuition

Ethical reasoning under uncertainty

Comfort with ambiguity

The mirror reflects not only who we are, but who we are becoming.

Looking Honestly into the Mirror

Healthcare AI is not a villain.

But it is not neutral.

It faithfully reflects:

Our tolerance for inequity

Our comfort with abstraction

Our willingness to confront tradeoffs

Our definition of care

The danger is not what the mirror shows.

The danger is refusing to look.

Choosing What the Mirror Should Reflect

If we want healthcare AI to reflect:

Dignity

Judgment

Accountability

Human presence

Then those values must be embedded structurally:

In governance

In incentives

In metrics

In authority design

Technology cannot supply values.

It can only amplify them.

Where This Leads

Healthcare AI forces a cultural reckoning.

It asks:

What do we consider acceptable harm?

Who bears the cost of efficiency?

Where do we draw moral boundaries?

What kind of care are we actually building?

Answering these questions is not a technical task.

It is a human one.

And it leads directly to the final question we must confront.