From Caregiver to Monitor: When Clinical Roles Quietly Collapse

For centuries, medicine has been practiced as a judgment-based profession.

Technology has always played a role, from stethoscopes to imaging to electronic records, but clinicians remained the primary locus of interpretation. They synthesized evidence, context, and human experience into decisions that carried moral weight.

That role is now under quiet pressure.

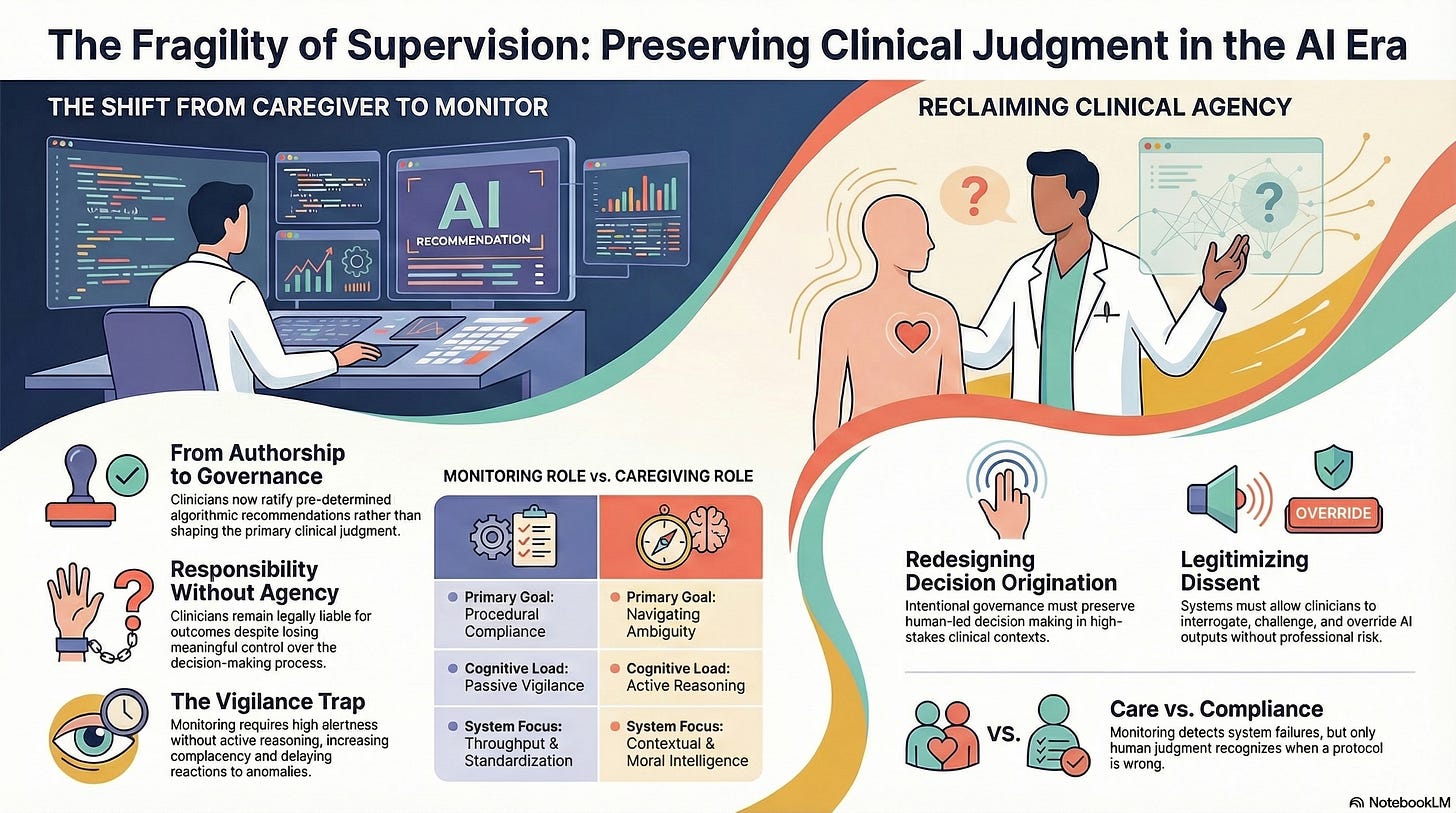

As AI systems become embedded in clinical workflows, clinicians are increasingly repositioned not as primary decision-makers, but as supervisors of automated processes. This shift is rarely announced. It arrives disguised as efficiency, safety, and support.

Yet its consequences are profound.

The Subtle Redefinition of Clinical Work

In AI-enabled healthcare environments, clinicians are often asked to:

Review algorithmic recommendations,

Validate system-generated risk scores,

Manage exceptions when thresholds are crossed,

Communicate decisions after they have already been operationalized.

At first glance, this appears reasonable. Machines handle complexity; humans handle nuance.

But in practice, this reconfiguration changes the nature of clinical authority.

When decisions originate elsewhere and are merely ratified by humans, clinicians shift from caregivers to monitors. They oversee outcomes rather than shape judgments.

Monitoring is not care.

It is governance without authorship.

Why Monitoring Feels Like Progress

Monitoring roles are attractive for institutional reasons.

They promise:

Consistency across providers,

Reduced cognitive load,

Faster throughput,

Defensible standardization.

They also align with liability frameworks that prioritize adherence to protocol over discretionary judgment.

From an organizational perspective, monitoring looks safer.

From a systems perspective, it introduces a hidden fragility: authority without agency.

Responsibility Without Control

Despite this shift, clinicians remain legally and ethically responsible for patient outcomes.

When harm occurs, it is still the clinician whose license is at risk, whose judgment is questioned, whose professionalism is scrutinized.

But increasingly, clinicians are accountable for decisions they did not meaningfully control.

This asymmetry is destabilizing.

It creates an environment where:

Following the system feels safer than challenging it,

Dissent becomes professionally risky,

Judgment is exercised defensively rather than thoughtfully.

Over time, clinicians internalize the message: your role is to ensure the system functions, not to question whether it should.

The Cognitive Cost of Supervision

Monitoring is cognitively demanding in a very specific way.

It requires sustained vigilance without the grounding of active reasoning. Clinicians must stay alert to failures even though they lack full visibility into how the system reached its decision.

This is a known risk pattern in other highly automated, high-reliability domains such as aviation. Supervisory roles without deep engagement increase:

Complacency during normal operation,

Delayed reaction during anomalies,

Overreliance on automated authority.

Healthcare is not immune to these dynamics.

A clinician who is asked to supervise an AI system without being embedded in its reasoning process is structurally disadvantaged, especially under time pressure.

When Judgment Becomes Procedural

One of the most corrosive effects of this role shift is the proceduralization of judgment.

Clinicians learn to ask:

“Does this meet the protocol?”

“Does the system flag an issue?”

“Is there justification to override?”

Rather than:

“Does this make sense for this person, now?”

“What is missing from this picture?”

“What are the second-order consequences?”

Judgment narrows. It becomes conditional, reactive, and constrained by system affordances.

Care risks becoming technically correct but contextually wrong.

Emotional Detachment as a System Outcome

This role redefinition also carries emotional consequences.

Clinicians derive meaning from agency: the sense that their decisions matter, that their expertise is exercised, that their care changes outcomes.

When that agency erodes, emotional engagement follows.

This is not burnout driven solely by workload. It is burnout driven by moral displacement, the sense of responsibility without empowerment.

A system that sidelines judgment undermines the emotional infrastructure of care.

Governance Is Driving This Shift, Whether Acknowledged or Not

Importantly, this transformation is not accidental.

It is driven by governance decisions:

How authority is allocated,

Where override rights exist,

How systems are paced,

What counts as acceptable deviation.

When institutions design AI systems that prioritize throughput, standardization, and defensibility without preserving clinician agency, they are making a choice about the future of care.

They are deciding that supervision is sufficient.

That choice deserves scrutiny.

Why Monitoring Cannot Replace Care

Monitoring can detect failures.

Care prevents them.

Monitoring can ensure compliance.

Care navigates ambiguity.

Monitoring reacts to thresholds.

Care recognizes when thresholds are wrong.

In complex, human-centered systems like healthcare, judgment is not an inefficiency to be minimized. It is a safety function.

A system that reduces clinicians to monitors may appear stable until it encounters novelty, moral conflict, or human complexity it was never designed to absorb.

Designing Roles That Preserve Judgment

If AI is to augment healthcare without hollowing it out, clinical roles must be intentionally redesigned.

This requires governance commitments:

Preserving decision origination for humans in high-stakes contexts,

Ensuring clinicians can interrogate and reshape system behavior,

Legitimizing slowdown and dissent,

Aligning accountability with actual control.

These are not interface choices.

They are institutional commitments.

Healthcare systems must decide whether clinicians are partners in judgment or custodians of automation.

The Larger Question

The transition from caregiver to monitor is not merely a workforce issue.

It is a statement about what kind of intelligence we value in healthcare:

Procedural intelligence, or

Moral and contextual intelligence.

As AI systems become more capable, the temptation will be to narrow human roles still further: to supervision, to compliance, and to escalation only when required.

Resisting that temptation is not nostalgia.

It is foresight.

Because when systems fail, and they will, it will not be the monitors who save them.

It will be the caregivers who still know how to judge.