Desperation as Input: When Need Becomes Data

Artificial intelligence systems are often described as data-hungry.

More data, we are told, leads to better models, better predictions, better outcomes.

This framing hides a crucial truth in healthcare:

Much of the data AI systems consume is not generated under neutral conditions.

It is generated under constraint, urgency, and desperation.

When unmet need becomes the primary driver of interaction, data takes on a different meaning.

Data Is Never Just Data

In healthcare, data is produced by people at moments of vulnerability:

When they are in pain,

When they are afraid,

When they lack access to timely care.

Symptoms are described not as observations, but as pleas.

Histories are compressed, distorted, or exaggerated by urgency.

Context is lost because there is neither time nor a listener.

This is not dishonesty.

It is adaptive behavior under stress.

AI systems do not experience this stress.

But they are trained on its artifacts.

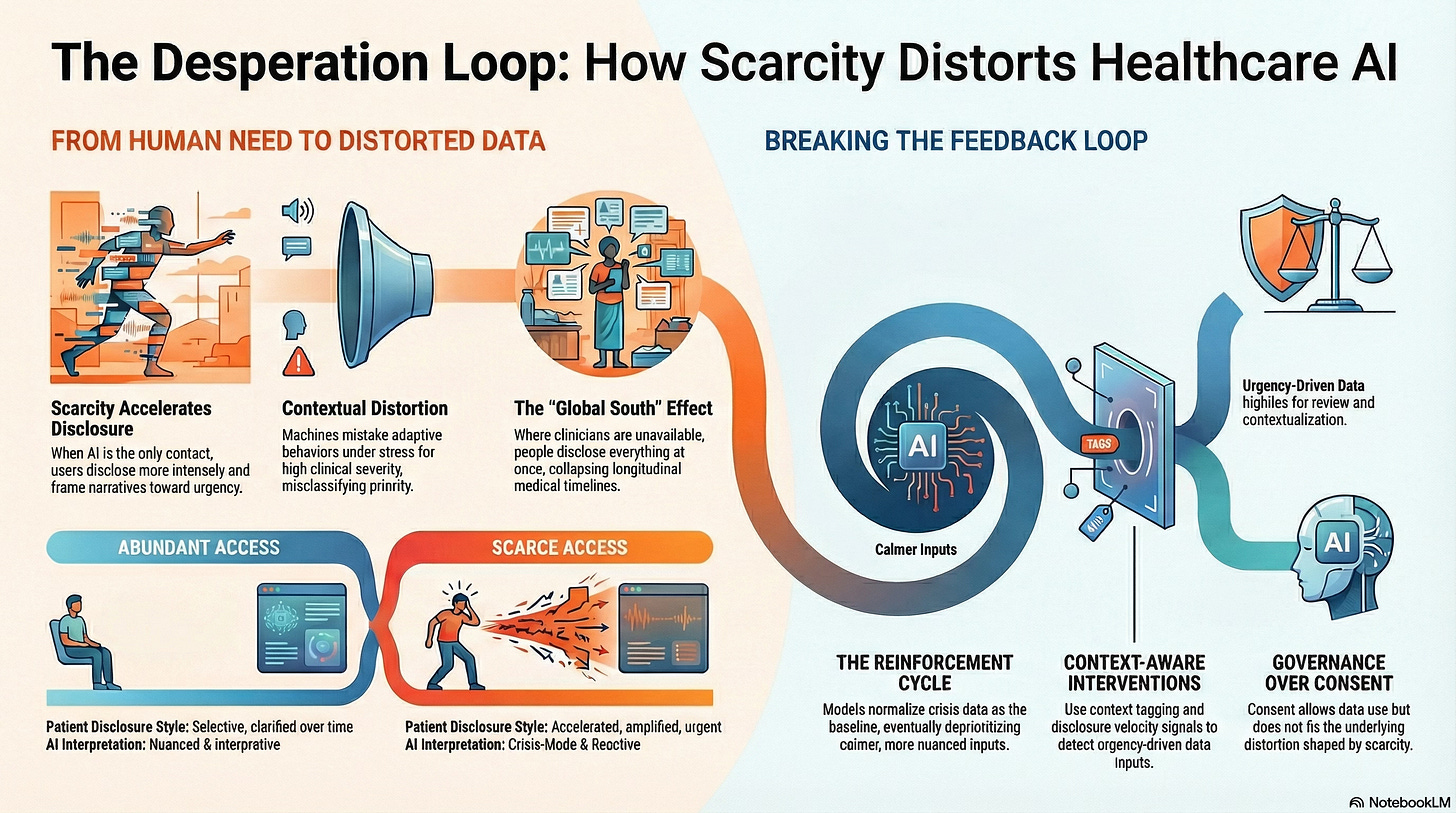

Scarcity Changes What People Disclose

When access to care is abundant, patients disclose selectively.

They wait for appointments.

They clarify symptoms over time.

They correct misunderstandings.

When access is scarce, disclosure accelerates.

Research across digital health platforms shows that when AI-mediated tools are the only point of contact:

Users disclose more intensely,

Describe symptoms more broadly,

And frame narratives toward urgency.

In mental health platforms, for example, some studies report higher disclosure of suicidal ideation and distress in chatbot interactions than in in-person settings. The likely reason is not that the AI is trusted more, but that it is available.

Availability reshapes honesty.

Desperation Is a Context, Not a Feature

AI systems typically treat inputs as signals.

They assume that what is expressed reflects the person's internal state.

But desperation alters expression.

When people need attention, they:

Amplify symptoms,

Collapse timelines,

And foreground worst-case scenarios.

From a human perspective, this is understandable.

From a machine perspective, it is indistinguishable from high severity.

The system does what it is designed to do:

Escalate,

Classify,

Prioritize.

However, it does so without awareness of why the data looks the way it does.

The Feedback Loop Nobody Designed

Here is where the risk compounds.

Scarcity limits access to human care

People turn to AI-mediated systems

Desperation intensifies disclosure

Models interpret this as higher prevalence or severity

Systems adjust thresholds and outputs accordingly

Over time, desperation becomes normalized as baseline data.

This is not bias in the conventional sense.

It is contextual distortion.

Once embedded, it is hard to detect because outcomes appear aligned with expressed needs.
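
The drift described above can be illustrated with a toy model. This is a minimal sketch under stated assumptions, not a description of any real system: every user has the same true severity, users under scarcity add a fixed amount to what they express, and the system re-centres its idea of "normal" on what it observes. The function name, parameters, and numbers are all hypothetical.

```python
def simulate_baseline_drift(true_severity=3.0, amplification=2.0,
                            scarcity_share=0.5, learning_rate=0.5, rounds=6):
    """Return the system's learned baseline after each update round.

    Assumptions (illustrative only):
    - every user has the same true severity;
    - a fraction `scarcity_share` of users express
      true_severity + amplification because they have no alternative;
    - each round the system moves its baseline part of the way
      toward the mean of what was expressed.
    """
    baseline = true_severity
    history = [baseline]
    for _ in range(rounds):
        # Mean of expressed severity across calm and desperate users.
        observed_mean = true_severity + scarcity_share * amplification
        # The system re-centres on observed data, not on true need.
        baseline += learning_rate * (observed_mean - baseline)
        history.append(baseline)
    return history


if __name__ == "__main__":
    for round_number, value in enumerate(simulate_baseline_drift()):
        print(round_number, value)
```

In this sketch, true severity never changes, yet the learned baseline climbs toward the amplified mean. A calm report at the true severity ends up below what the system treats as normal, which is the distortion the loop produces.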

When Vulnerability Becomes Training Data

In healthcare, vulnerability is unavoidable.

But turning vulnerability into unexamined training data is a governance failure.

AI systems trained on desperation risk:

Overestimating prevalence,

Misclassifying urgency,

And reinforcing crisis-oriented interactions.

More subtly, they begin to expect distress.

This reshapes system behavior:

Calmer inputs are deprioritized,

Nuanced narratives are lost,

And care becomes reactive rather than interpretive.

The system learns urgency rather than health.

Global South Dynamics Intensify the Risk

In the Global South, where AI tools may be the only accessible interface, this effect is amplified.

When:

Clinicians are unavailable,

Diagnostic tools are scarce,

And follow-up is uncertain,

People disclose everything at once.

There is no second chance.

AI systems trained on such data inherit:

Collapsed timelines,

Absent baselines,

And unresolved uncertainty.

What appears to be rich data is often a compressed reality.

Without governance, these systems risk mistaking desperation for epidemiology.

Consent Does Not Correct This

Even when consent is obtained, the problem remains.

People may agree to data use, but they do not consent to:

Their vulnerability being normalized,

Their urgency becoming a training signal,

Or their lack of alternatives shaping system behavior.

Consent governs permission.

It does not govern contextual distortion.

Treating consent as sufficient protection ignores how scarcity shapes data before consent ever occurs.

Why This Is a Governance Problem

This is not a technical flaw.

It is a systems design failure.

Governance must address:

How data is generated,

Under what conditions,

And with what alternatives available.

Key governance questions include:

Should data collected under access scarcity be weighted differently?

How do systems detect desperation-driven disclosure?

What safeguards prevent crisis-mode data from redefining normality?

These questions rarely appear in AI governance frameworks.

Yet they determine system behavior at scale.
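
To make the first question concrete, here is a minimal sketch of what differential weighting could look like when estimating average severity across a population. The `Report` type, the `constrained_access` tag, and the weight of 0.5 are hypothetical; choosing the weight is exactly the kind of decision governance would have to own.

```python
from dataclasses import dataclass


@dataclass
class Report:
    severity: float           # expressed severity, on any consistent scale
    constrained_access: bool  # hypothetical tag: no alternative care was available


def weighted_mean_severity(reports, constrained_weight=0.5):
    """Weighted mean of severity, down-weighting reports made under scarcity.

    The weight is an assumption for illustration, not a recommended value.
    """
    total = 0.0
    weight_sum = 0.0
    for report in reports:
        weight = constrained_weight if report.constrained_access else 1.0
        total += weight * report.severity
        weight_sum += weight
    if weight_sum == 0:
        raise ValueError("no reports with non-zero weight")
    return total / weight_sum


if __name__ == "__main__":
    reports = [
        Report(2.0, False), Report(2.0, False),
        Report(8.0, True), Report(8.0, True),
    ]
    print(weighted_mean_severity(reports, constrained_weight=1.0))  # unweighted
    print(weighted_mean_severity(reports, constrained_weight=0.5))  # down-weighted
```

The weighting here applies only to an aggregate estimate. It says nothing about how an individual in distress should be triaged, and applying it there would risk penalizing the people with the fewest alternatives.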

Designing for Context-Aware Data Use

There are concrete design interventions available:

Context tagging of data collected under constrained access

Disclosure velocity signals to detect urgency-driven input

Adaptive thresholds that account for scarcity conditions

Human review triggers when data suggests systemic distress rather than individual pathology

Decoupling training data from frontline crisis interactions

These mechanisms do not reduce access.

They prevent distortion.
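
Several of the interventions above can be sketched together. The following is one possible reading, not a reference design: it covers context tagging, a disclosure velocity signal, a human review trigger for systemic distress, and holding crisis interactions out of training. Adaptive thresholds are left out because the direction of adjustment is a policy choice. All names and cutoffs are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Session:
    symptoms_disclosed: int   # distinct symptoms mentioned in the session
    duration_minutes: float   # length of the interaction
    constrained_access: bool  # context tag: no alternative care was available


def disclosure_velocity(session):
    """Symptoms disclosed per minute, with duration floored at one minute."""
    return session.symptoms_disclosed / max(session.duration_minutes, 1.0)


def is_urgency_driven(session, velocity_cutoff=2.0):
    """Flag sessions that are both scarcity-tagged and unusually fast."""
    return (session.constrained_access
            and disclosure_velocity(session) >= velocity_cutoff)


def split_for_training(sessions, velocity_cutoff=2.0):
    """Separate sessions usable for training from those held for review.

    Flagged sessions are held aside, not deleted, so people can examine them.
    """
    eligible, held = [], []
    for session in sessions:
        if is_urgency_driven(session, velocity_cutoff):
            held.append(session)
        else:
            eligible.append(session)
    return eligible, held


def needs_systemic_review(sessions, velocity_cutoff=2.0, share_cutoff=0.3):
    """Trigger human review when flagged sessions make up a large share."""
    if not sessions:
        return False
    flagged = sum(is_urgency_driven(s, velocity_cutoff) for s in sessions)
    return flagged / len(sessions) >= share_cutoff


if __name__ == "__main__":
    batch = [
        Session(10, 2.0, True),
        Session(3, 15.0, False),
        Session(8, 1.0, True),
        Session(2, 10.0, True),
    ]
    eligible, held = split_for_training(batch)
    print(len(eligible), len(held), needs_systemic_review(batch))
```

None of these functions changes what the user receives in the moment. They only affect what the system later learns from the interaction and when a person is asked to look at the pattern.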

A Cognitive Age Insight

The Cognitive Age is not just about more data.

It is about knowing when data is shaped by need rather than truth.

Healthcare AI systems that cannot distinguish desperation from diagnosis risk building intelligence on suffering rather than understanding.

That does not make them immoral.

It makes them ungoverned.

If we want AI to support human judgment, we must first acknowledge that data carries the emotional and structural fingerprints of the systems that generate it.

Desperation is not noise.

But it is not the truth either.

And governing that difference is one of the defining challenges of healthcare in the Cognitive Age.