Designing for Reflection: How Healthcare Systems Can Slow Down Without Failing

Modern healthcare systems are built to move.

Patients flow through triage.

Decisions cascade across departments.

Resources are allocated under constant pressure.

AI accelerates all of this. It compresses the time between signal and action, promising earlier detection, faster intervention, and greater efficiency.

But not all healthcare decisions should move at machine speed.

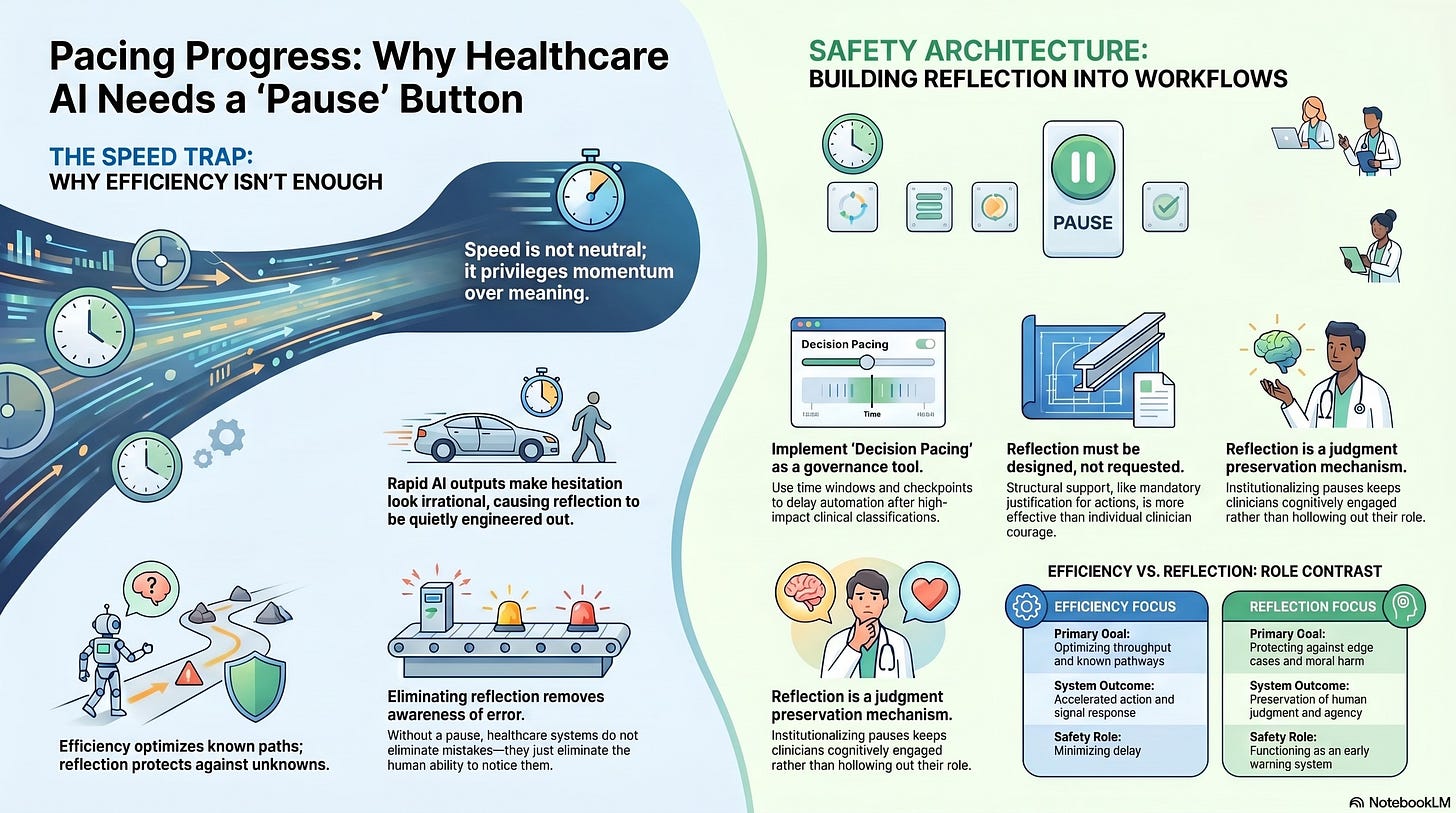

Some require reflection, not as a personal virtue, but as a system property. Designing for reflection is one of the hardest governance challenges of the AI era, precisely because it appears to conflict with everything institutions are incentivized to optimize.

Why Reflection Disappears First

Reflection is fragile under pressure.

In overloaded healthcare environments, slowing down is often equated with failure:

Longer wait times

Reduced throughput

Missed targets

Increased cost

AI systems amplify this dynamic by making acceleration feel safe. When models produce confident outputs backed by data, hesitation looks irrational.

As a result, reflection is quietly engineered out, not through explicit prohibition, but through workflow design.

If a system does not create space for reflection, it will not happen.

Reflection Is Not the Opposite of Efficiency

One of the most persistent misconceptions in healthcare is that reflection and efficiency are opposing forces.

They are not.

Reflection prevents certain classes of failure that efficiency cannot detect:

Misclassification of edge cases

Moral harm invisible to metrics

Cascading errors triggered by early assumptions

Loss of trust that undermines long-term outcomes

Efficiency optimizes known pathways.

Reflection protects against the unknown ones.

In complex systems, eliminating reflection increases fragility, even as performance metrics improve.

Where Reflection Actually Matters

Not every decision needs reflection. Many should be fast.

The governance challenge is to identify which moments require a pause.

These often include:

Decisions that irreversibly alter care pathways

Classifications that affect eligibility or access

Situations involving conflicting values or tradeoffs

Contexts of patient distress or constrained choice

Novel cases where historical data is weak

In these moments, speed is not neutral. It privileges momentum over meaning.

Reflection is not about slowing everything down.

It is about slowing down the right things.
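One way to make "the right things" concrete is to write the criteria above down as an explicit check that a workflow can call. The following is a minimal sketch, not a validated clinical rule set: the `DecisionContext` fields, the `PAUSE_REASONS` table, and both function names are hypothetical, and in practice each flag would have to be defined and set by the institution's own governance process.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class DecisionContext:
    """Hypothetical flags describing one pending decision."""
    irreversible: bool = False
    affects_eligibility: bool = False
    conflicting_values: bool = False
    patient_distress: bool = False
    weak_historical_data: bool = False


# Maps each flag to the reason shown to a reviewer (illustrative wording).
PAUSE_REASONS = {
    "irreversible": "irreversibly alters the care pathway",
    "affects_eligibility": "affects eligibility or access",
    "conflicting_values": "involves conflicting values or tradeoffs",
    "patient_distress": "patient is distressed or has constrained choice",
    "weak_historical_data": "novel case with weak historical data",
}


def pause_reasons(ctx: DecisionContext) -> List[str]:
    """Return every reason this decision should pause, in table order."""
    return [reason for flag, reason in PAUSE_REASONS.items() if getattr(ctx, flag)]


def needs_pause(ctx: DecisionContext) -> bool:
    """A decision stays fast unless at least one criterion applies."""
    return bool(pause_reasons(ctx))
```

A decision with no flags set passes straight through, which keeps the default path fast. Returning the reasons rather than a bare yes or no gives the reviewer something specific to reflect on.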

Reflection Must Be Designed, Not Requested

Healthcare systems often rely on individual clinicians to “speak up” when something feels wrong.

This is insufficient.

Reflection cannot depend on courage alone. It must be structurally supported.

Designing for reflection means:

Embedding pause points into workflows

Requiring human justification for certain actions

Allowing decisions to be temporarily reversible

Signaling that slowdown is legitimate, not deviant

If reflection is optional, it will be overridden by urgency.

If it is required, it becomes part of normal operation.
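Two of these design elements, required justification and temporary reversibility, can be sketched as a small state machine. This is an illustration under stated assumptions rather than a reference design: the `PathwayChange` class, its state names, and the `JustificationRequired` exception are all hypothetical, and a real system would also record who acted and when.

```python
from dataclasses import dataclass, field
from typing import List, Optional


class JustificationRequired(Exception):
    """Raised when a change is applied without a human-written reason."""


@dataclass
class PathwayChange:
    """A change that is provisional (and reversible) before it becomes final."""
    description: str
    state: str = "proposed"  # proposed -> provisional -> final | reverted
    justification: Optional[str] = None
    history: List[str] = field(default_factory=lambda: ["proposed"])

    def _move(self, allowed_from: str, new_state: str) -> None:
        # Every transition is checked, so a step cannot be skipped.
        if self.state != allowed_from:
            raise ValueError(f"cannot go from {self.state} to {new_state}")
        self.state = new_state
        self.history.append(new_state)

    def apply(self, justification: str) -> None:
        # The pause point: no reason given, no change applied.
        if not justification.strip():
            raise JustificationRequired(self.description)
        self._move("proposed", "provisional")
        self.justification = justification.strip()

    def revert(self) -> None:
        # Reversal is only possible while the change is still provisional.
        self._move("provisional", "reverted")

    def finalize(self) -> None:
        self._move("provisional", "final")
```

Because the justification check sits inside `apply`, it is not something a clinician has to remember to do under pressure. It is simply how the action works.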

Decision Pacing as a Governance Tool

One of the most underused governance levers in AI-enabled systems is decision pacing.

Decision pacing controls how quickly actions propagate once a model's output crosses an action threshold.

In healthcare AI, this might mean:

Delaying downstream automation after high-impact classifications

Staging decisions across multiple checkpoints

Creating time windows for human review before execution

Preventing instantaneous system-wide updates based on a single inference

These mechanisms do not block care.

They protect it from premature certainty.

Pacing acknowledges a basic truth: some errors are far more costly than delay.
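A review window of this kind can be sketched in a few lines. The example below is a simplified illustration, not a production scheduler: `PacingGate`, `Status`, and the `review_window_s` parameter are hypothetical names. It also assumes that an unreviewed action is released once its window closes; an institution could just as reasonably require explicit approval instead.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Optional


class Status(Enum):
    HELD = "held"          # waiting inside the review window
    RELEASED = "released"  # downstream automation may proceed
    BLOCKED = "blocked"    # a human reviewer rejected the action


@dataclass
class PendingAction:
    action_id: str
    high_impact: bool
    submitted_at: float                       # seconds on any consistent clock
    reviewer_decision: Optional[bool] = None  # None until a human reviews it


class PacingGate:
    """Holds high-impact actions for a review window before release."""

    def __init__(self, review_window_s: float) -> None:
        self.review_window_s = review_window_s
        self._actions: Dict[str, PendingAction] = {}

    def submit(self, action_id: str, high_impact: bool, now: float) -> None:
        self._actions[action_id] = PendingAction(action_id, high_impact, now)

    def review(self, action_id: str, approve: bool) -> None:
        self._actions[action_id].reviewer_decision = approve

    def status(self, action_id: str, now: float) -> Status:
        action = self._actions[action_id]
        if not action.high_impact:
            return Status.RELEASED  # routine actions are never delayed
        if action.reviewer_decision is False:
            return Status.BLOCKED   # a rejection holds regardless of time
        if action.reviewer_decision is True:
            return Status.RELEASED  # approval can release early
        # Assumption: with no review, the action releases when the window ends.
        window_open = now - action.submitted_at < self.review_window_s
        return Status.HELD if window_open else Status.RELEASED
```

The clock is passed in as `now` rather than read from the system, which keeps the gate easy to test and makes the delay an explicit, auditable input. Only high-impact actions ever wait, so routine care is not slowed.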

Reflection Protects Human Judgment

Reflection is also how human judgment remains viable under acceleration.

When systems move too fast:

Clinicians stop reasoning

Oversight becomes procedural

Overrides become rare and risky

By contrast, systems that institutionalize reflection:

Keep humans cognitively engaged

Legitimize questioning

Preserve moral agency

Reflection is not a slowdown tax.

It is a judgment preservation mechanism.

Without it, humans remain present but hollowed out.

The Emotional Dimension of Reflection

Reflection is not purely cognitive. It is emotional.

It allows clinicians to:

Process uncertainty

Regulate stress

Recognize moral discomfort

Notice when efficiency conflicts with care

AI systems that suppress reflection also suppress emotional signals, the very signals that often precede recognition of harm.

In this sense, reflection functions as an early warning system.

When healthcare systems eliminate reflection, they do not eliminate error. They eliminate awareness of error.

Institutional Resistance to Reflection

Designing for reflection is difficult because it challenges institutional habits.

It forces organizations to confront uncomfortable questions:

Where are we willing to accept delay?

Who has the authority to pause the system?

What decisions should never be fully automated?

Which outcomes matter more than throughput?

These are governance questions, not technical ones.

They cannot be answered by optimization alone.

Reflection as a Safety Architecture

In high-reliability domains such as aviation and nuclear power, safety is achieved not by eliminating friction, but by strategically placing it.

Healthcare AI requires a similar shift.

Reflection should be treated as:

A safety architecture

A governance feature

A marker of institutional maturity

Systems that can slow themselves deliberately are more resilient than those that cannot.

Speed without brakes is not progress.

It is deferred failure.

What Designing for Reflection Signals

When a healthcare system embeds reflection, it sends a signal:

That judgment is valued

That care is more than throughput

That humans are not there to rubber-stamp machines

This signal shapes behavior, culture, and trust.

Patients notice when decisions feel rushed.

Clinicians notice when questioning is unwelcome.

Reflection restores credibility in environments where automation threatens to erode it.

The Larger Stakes

As AI becomes more capable, the temptation will be to remove pauses entirely and to treat reflection as an inefficiency that technology has rendered obsolete.

That temptation must be resisted.

Healthcare does not fail because it lacks speed.

It fails when speed outruns sense-making.

Designing for reflection is how institutions remain humane under acceleration.

What Comes Next

If reflection can be designed into systems, the next question is whether institutions are willing to realign their incentives around it.

Because slowing down in the right places often conflicts with momentum elsewhere.

Understanding that tension, and deciding whose outcomes ultimately matter, is where we turn next.