Cognitive Distance in Healthcare: Why Clinicians Must Stay Close

Healthcare has always depended on proximity.

Not just physical proximity to patients, but cognitive and emotional proximity to decisions: understanding why a choice is being made, sensing when something feels off, and recognizing when rules no longer fit reality.

As AI systems become embedded in healthcare workflows, a new and underappreciated risk emerges: cognitive distance, the growing gap between what systems do and what humans can meaningfully understand, question, or influence.

This distance is not a side effect. It is a structural consequence of acceleration.

What Cognitive Distance Looks Like in Practice

Cognitive distance appears when a clinician is asked to act on a recommendation they cannot fully explain.

The system flags a patient as low priority.

A risk score downgrades urgency.

An automated triage pathway reroutes care.

Nothing is obviously wrong.

The numbers look reasonable.

The model is statistically sound.

However, the clinician no longer has a clear mental model of why this patient was classified this way. They know only that the system arrived at this classification.

At that point, the clinician is no longer exercising judgment.

They are supervising outcomes.

Why Interpretability Alone Is Not Enough

Much attention has been given to explainable AI: surfacing which variables influenced a decision, highlighting correlations, or visualizing model weights.

These tools are useful, but they do not resolve the deeper problem.

Understanding how a model works is not the same as understanding whether its output makes sense in context.

Healthcare decisions are not purely analytical. They rely on:

Tacit knowledge

Embodied experience

Awareness of social and emotional cues

Sensitivity to uncertainty

A technically interpretable system can still outrun a human’s ability to feel when something is wrong.

Cognitive distance is not a failure of transparency.

It is a failure of alignment between system tempo and human sense-making.

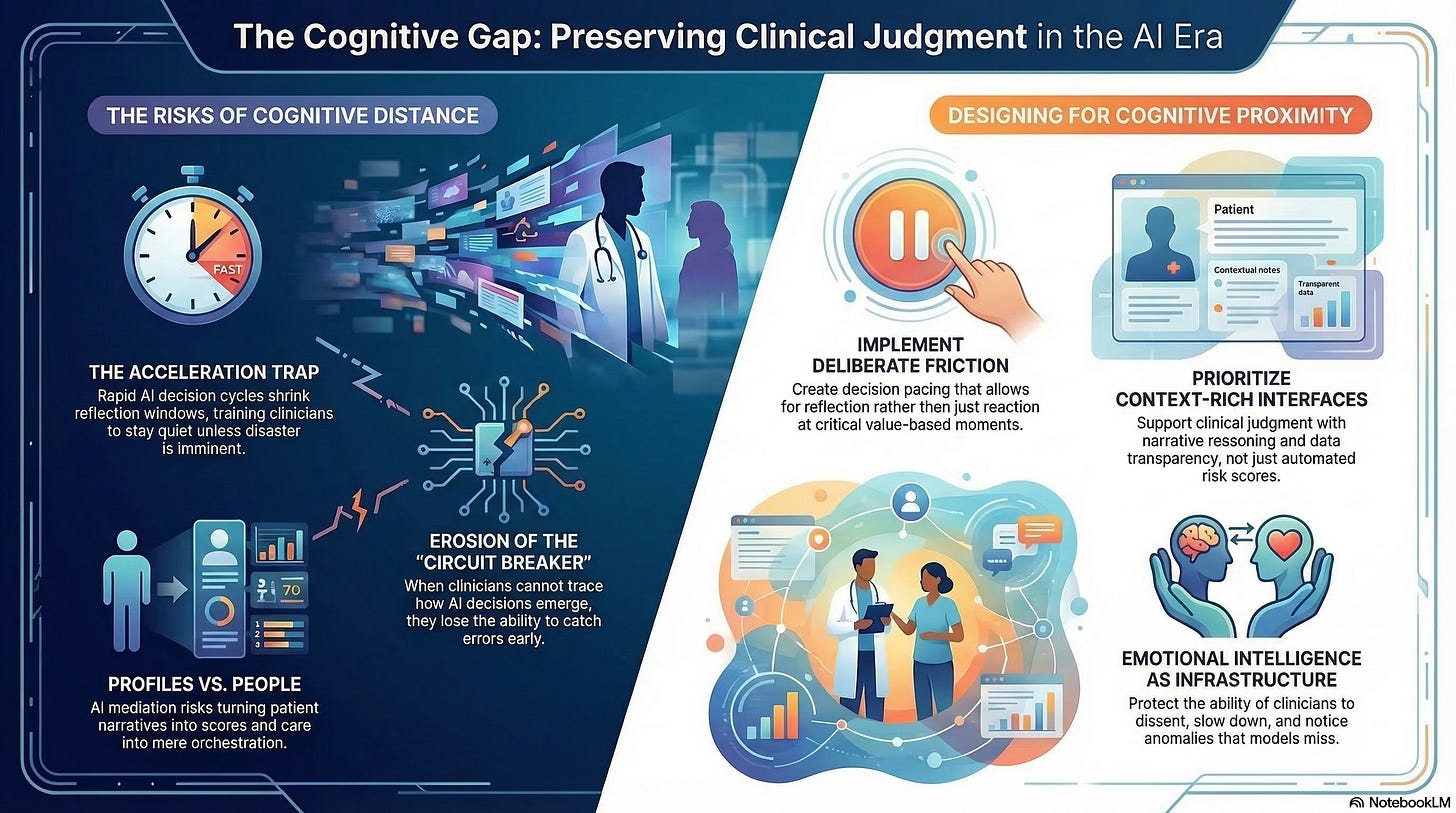

The Acceleration Trap

AI systems promise speed. In healthcare, speed is often framed as safety: faster triage, quicker diagnoses, and earlier intervention.

But speed has a cost.

As decision cycles compress:

Reflection windows shrink

Escalation thresholds rise

Hesitation becomes deviation

Clinicians adapt by trusting the system unless there is overwhelming evidence to intervene. Over time, this becomes habitual.

The system does not remove clinicians from the loop.

It trains them to stay quiet unless disaster is imminent.

This is how cognitive distance becomes normalized.

When Distance Undermines Empathy

Empathy requires proximity.

It depends on being close enough to:

Understand patient narratives

Notice inconsistencies

Recognize when data does not capture lived reality

When AI mediates a greater share of the clinical encounter through pre-filtered information, summarized histories, and automated recommendations, clinicians increasingly interact with representations rather than people.

Patients become profiles.

Conditions become scores.

Care becomes orchestration.

The risk is not that clinicians stop caring.

It is that the system makes caring harder to operationalize.

A system that accelerates beyond empathy does not feel cruel.

It feels efficient.

Why Distance Is a Safety Risk

Cognitive distance erodes safety long before it produces obvious harm.

When clinicians cannot:

Trace how a decision emerged

Articulate why an alternative was rejected

Confidently override the system

they lose the ability to act as circuit breakers.

Errors are not caught early.

Edge cases are missed.

Responsibility diffuses.

By the time a failure becomes visible, the causal chain is too complex to reconstruct. Accountability becomes procedural rather than moral.

This is why safety in AI-enabled healthcare cannot be reduced to accuracy metrics alone. A system can be statistically reliable and operationally unsafe if humans are cognitively sidelined.

Staying Close Requires Structural Design

Cognitive proximity does not arise from goodwill alone. It must be designed.

Healthcare systems that preserve human judgment under acceleration share several characteristics:

Decision pacing that allows reflection, not just reaction

Clear override authority, exercised without penalty

Context-rich interfaces that support narrative reasoning, not just scores

Deliberate friction at moments where values, not efficiency, are at stake

These are not usability features.

They are governance choices.

They signal that human sense-making is not an inconvenience to be minimized, but a capability to be protected.
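
To make two of these characteristics concrete, here is a minimal sketch of how deliberate friction and penalty-free override might look in software. It is an illustration under stated assumptions, not a description of any real clinical system: the names `Recommendation`, `Decision`, `finalize`, and the `value_sensitive` flag are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Recommendation:
    patient_id: str
    suggested_priority: str  # e.g. "low", "routine", "urgent"
    rationale: str           # narrative context for the clinician, not just a score
    value_sensitive: bool    # hypothetical flag: values, not only efficiency, are at stake


@dataclass
class Decision:
    patient_id: str
    final_priority: str
    overridden: bool
    clinician_note: Optional[str] = None


def finalize(rec: Recommendation,
             clinician_priority: Optional[str] = None,
             clinician_note: Optional[str] = None) -> Decision:
    """Turn a system recommendation into a decision with a clinician in the loop."""
    # Deliberate friction: a value-sensitive recommendation is never auto-accepted.
    if rec.value_sensitive and clinician_priority is None:
        raise ValueError("explicit clinician review is required for this recommendation")

    # Routine acceptance: the clinician made no change to the suggestion.
    if clinician_priority is None or clinician_priority == rec.suggested_priority:
        return Decision(rec.patient_id, rec.suggested_priority, False, clinician_note)

    # Override authority: the clinician's choice wins, and a justification note
    # is optional, so overriding carries no extra procedural burden.
    return Decision(rec.patient_id, clinician_priority, True, clinician_note)
```

The design choice worth noticing is where the burden falls: the system must wait for a human when values are at stake, while the human never has to justify disagreeing with the system.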

Emotional Intelligence as Infrastructure

In high-velocity environments, emotional intelligence becomes a form of infrastructure.

Leaders who:

Notice cognitive overload

Legitimize slowing down

Protect dissent under pressure

are not being cautious. They are preserving system integrity.

Clinicians who remain emotionally engaged are more likely to notice anomalies, challenge recommendations, and advocate for patients who do not fit the model.

Distance dulls responsibility.

Engagement sustains it.

The Hidden Cost of Delegation

Delegating decisions to machines feels rational when systems perform well.

But delegation reshapes skill over time.

As clinicians are relieved of certain judgments, the opportunity to develop and maintain those judgments erodes. What begins as assistance becomes dependency.

Eventually, staying close is no longer possible, not because humans are excluded, but because the cognitive muscles required have atrophied.

This is not a future risk. It is already visible in highly automated environments.

Why Staying Close Is the Hard Part

Cognitive distance grows quietly because it aligns with institutional incentives:

Efficiency

Throughput

Standardization

Scalability

Staying close feels expensive.

It takes time.

It resists automation.

It complicates metrics.

But healthcare has never been purely an optimization problem.

It is a moral practice operating under constraint.

What Comes Next

If cognitive distance widens unchecked, the clinician’s role shifts fundamentally from judgment holder to system monitor.

Understanding that transition, and deciding whether it is acceptable, requires confronting how automation reshapes professional identity and responsibility.

That is where we turn next.