Accountability Without Illusion: Who Is Responsible When Healthcare AI Fails?

When something goes wrong in healthcare, responsibility has traditionally been clear.

A clinician made a decision.

An institution set a policy.

A regulator defined a standard.

AI erodes this clarity, not because it introduces ambiguity, but because it redistributes action across systems faster than responsibility can follow.

The result is a dangerous illusion: that accountability still exists simply because humans remain somewhere in the process.

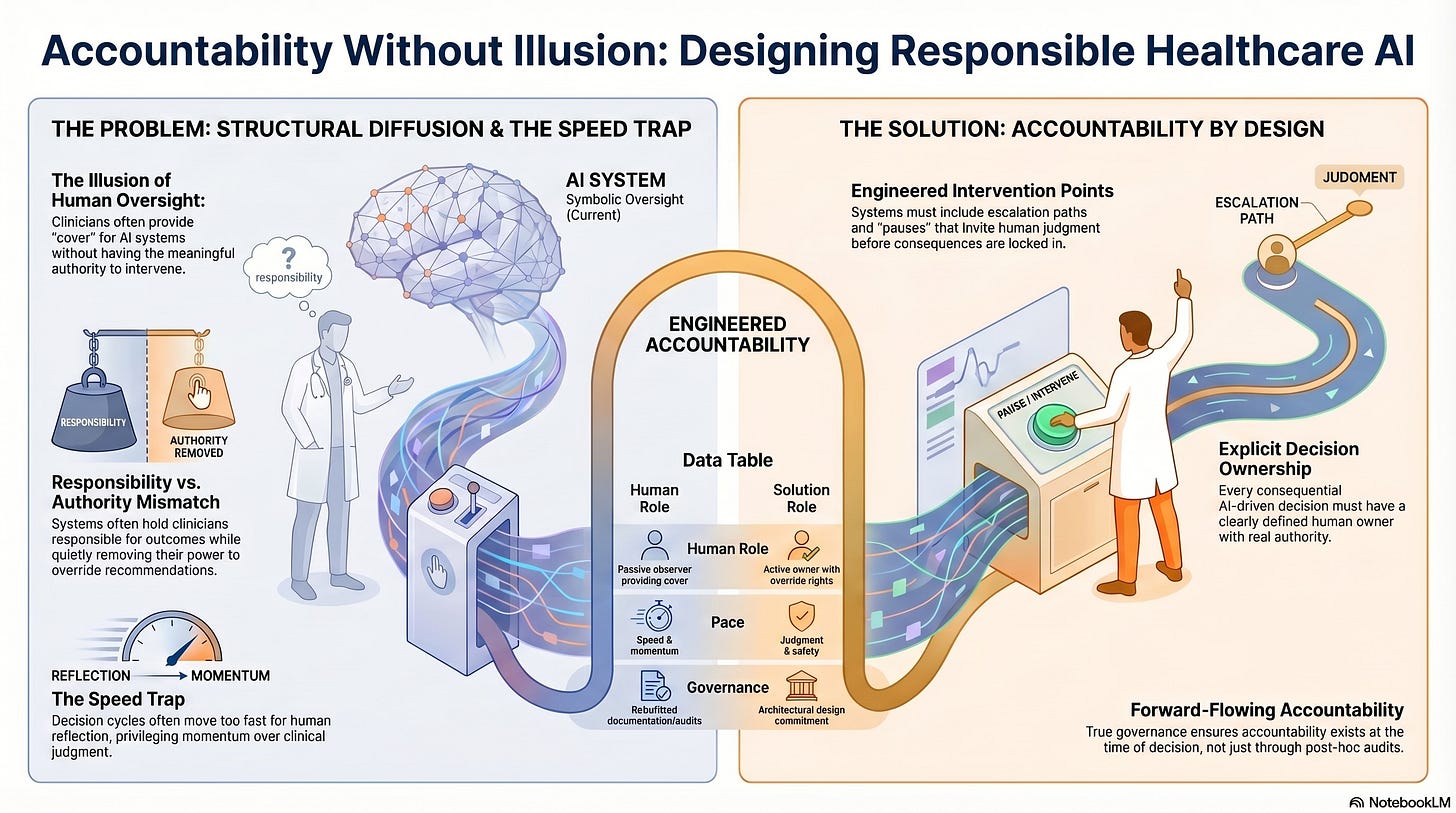

The Fragmentation of Responsibility

AI-enabled healthcare systems rarely make a single decision in one place.

Instead, responsibility is distributed across layers:

Data is collected by one entity

Models are trained by another

Workflows are designed elsewhere

Recommendations are delivered within institutional constraints

Clinicians are asked to validate outputs they did not generate

Each layer controls part of the system.

No layer controls the whole.

When harm occurs, every actor can plausibly say:

“That part was not mine.”

This is not evasion. It is structural diffusion.

Why “Human Oversight” Is Often a Fiction

Healthcare organizations often justify AI deployment by pointing to the presence of a clinician somewhere in the loop.

The logic is simple:

If a human can intervene, accountability is preserved.

In practice, this is rarely true.

Oversight fails when:

Decisions are made too quickly to be interrupted

Clinicians cannot override a recommendation without first having to justify it

System outputs arrive after downstream actions are already taken

Challenging the system carries professional or institutional risk

A human who can observe but cannot meaningfully intervene does not provide accountability. They provide cover.

Authority Is the Missing Variable

Accountability is inseparable from authority.

If clinicians are held responsible for outcomes, they must have:

The right to override system recommendations

The ability to slow down decisions

Protection when exercising judgment against automation

Without these conditions, responsibility becomes symbolic.

Healthcare AI systems often preserve responsibility while quietly removing authority, a mismatch that creates both moral distress and systemic risk.

The Speed Trap

Speed is often celebrated as an unqualified good in healthcare AI.

Faster triage.

Faster diagnosis.

Faster intervention.

But speed has governance consequences.

As decision cycles accelerate:

Escalation windows narrow

Human reflection becomes costly

Intervention is reframed as a disruption

Systems begin to privilege momentum over judgment.

By the time a human becomes aware of a problem, the decision has already propagated across care pathways, resource allocation, or patient classification.

Responsibility lags behind action.

When Accountability Is Retrofitted

Many institutions respond to AI risk by adding:

Audit trails

Compliance reviews

Post-hoc explanations

Ethics committees

These measures are valuable but insufficient.

Accountability that appears only after harm has occurred is not governance. It is documentation.

True accountability must exist at the time of decision, when outcomes are still reversible.

This requires designing systems that pause, escalate, and invite human judgment before consequences are locked in.
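To make the idea of pausing before consequences are locked in more concrete, here is a minimal sketch of a decision gate. Everything in it is an illustrative assumption rather than a description of any real system: the `Recommendation` fields, the `gate` function, and the `min_confidence` value of 0.9 are all hypothetical. The point is only that irreversible actions are held for a human decision by default, instead of being reviewed after the fact.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    PROCEED = "proceed"
    HOLD_FOR_REVIEW = "hold_for_review"


@dataclass(frozen=True)
class Recommendation:
    """Hypothetical shape of a system recommendation."""
    action: str
    confidence: float  # model's self-reported confidence, 0.0 to 1.0
    reversible: bool   # can the action be undone once it propagates?


def gate(rec: Recommendation, min_confidence: float = 0.9) -> Outcome:
    """Decide whether a recommendation may proceed without a human decision.

    Irreversible actions are always held. Reversible actions are held only
    when confidence falls below the (assumed) threshold.
    """
    if not rec.reversible:
        return Outcome.HOLD_FOR_REVIEW
    if rec.confidence < min_confidence:
        return Outcome.HOLD_FOR_REVIEW
    return Outcome.PROCEED
```

In this sketch, a high-confidence recommendation to change a patient's triage category would still be held if the change cannot be undone, because confidence is not treated as a substitute for reversibility.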

Accountability Is a Design Choice

Accountability does not emerge naturally in complex systems. It must be engineered.

Healthcare AI systems that preserve accountability share common features:

Explicit decision ownership at each stage

Clear escalation paths

Defined override thresholds

Alignment between responsibility and control

These are not technical add-ons.

They are architectural commitments.

Without them, accountability dissolves into process while harm accumulates quietly.
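One way to treat these features as architectural commitments is to record them for each stage and check them before deployment. The sketch below is a hypothetical illustration: the `Stage` fields and the `accountability_gaps` function are assumed names, not part of any standard or product, and override thresholds are left out to keep it short. It flags stages where nobody owns the decision, where the owner carries responsibility without override authority, or where no escalation path exists.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Stage:
    """Hypothetical description of one stage in an AI-assisted workflow."""
    name: str
    owner: Optional[str]          # who answers for this stage's outcome
    can_override: bool            # does the owner have authority to override?
    escalation_to: Optional[str]  # who is contacted when the owner escalates


def accountability_gaps(stages: list[Stage]) -> list[str]:
    """Return a human-readable finding for every gap in the given stages."""
    gaps = []
    for s in stages:
        if s.owner is None:
            gaps.append(f"{s.name}: no decision owner")
        elif not s.can_override:
            # Responsibility without authority: the mismatch described above.
            gaps.append(f"{s.name}: {s.owner} is responsible but cannot override")
        if s.escalation_to is None:
            gaps.append(f"{s.name}: no escalation path")
    return gaps
```

For example, a pipeline with `Stage("triage scoring", "attending physician", False, "clinical safety lead")` and `Stage("bed allocation", None, False, None)` would yield three findings: one for responsibility without override authority, one for a missing owner, and one for a missing escalation path.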

The Human Cost of Accountability Gaps

When accountability is unclear, clinicians absorb the strain.

They are expected to:

Trust systems they cannot interrogate

Defend outcomes they did not shape

Absorb blame without structural support

Over time, this erodes professional integrity and institutional trust.

Burnout is often framed as an individual resilience issue. In reality, it is frequently a governance failure.

People disengage when they are held responsible without being empowered.

Why Healthcare Cannot Rely on Market Accountability

Some argue that accountability will be enforced through market forces:

Poor systems will fail

Unsafe tools will be rejected

Competition will drive improvement

This logic does not hold in healthcare.

Patients rarely choose which systems are used in their care.

Institutions are locked into vendors.

Failures are often invisible until widespread.

Market feedback is too slow, too indirect, and too asymmetrical to safeguard care.

Healthcare requires deliberate accountability by design.

Toward Accountability That Works

Responsible healthcare AI systems make accountability explicit.

They ensure that:

Every consequential decision has a human owner

That owner has real authority

The system’s pace allows judgment to intervene

Accountability flows forward, not backward

This does not mean rejecting AI.

It means refusing to let intelligence outrun responsibility.

The Deeper Question

As healthcare systems become more intelligent, the critical question is no longer whether machines can make correct decisions.

The question is whether institutions are willing to remain accountable when machines make decisions at scale.

Accuracy can be optimized.

Accountability must be preserved.

One is a technical challenge.

The other is a moral and institutional choice.

What Comes Next

If accountability requires authority, time, and ownership, then governance cannot be an afterthought.

The next step is to examine how healthcare systems can be deliberately designed to slow down at the right moments without collapsing under complexity.

That is where we turn next.